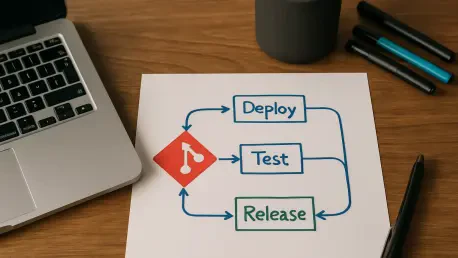

The rapid transition to cloud-native architectures has established GitOps as a leading framework for continuous delivery, yet the visual reassurance of a green synchronization checkmark often masks underlying architectural instabilities. In the current 2026 landscape, tools like Argo CD have become the operational heartbeat of many Kubernetes environments, establishing Git as the source of truth for infrastructure state. This paradigm lets platform engineering teams maintain high-velocity deployment cycles with a perceived layer of governance. However, the standard “Synced” and “Healthy” status indicators have a narrow meaning: “Synced” confirms that the live cluster state matches the repository contents, and “Healthy” reports the outcome of resource-level health checks. Neither guarantees that the content itself is safe or logically sound. This creates a dangerous complacency in which syntactic correctness is mistaken for operational reliability. A manifest can be perfectly formatted YAML and still harbor catastrophic flaws that lead to service outages, resource exhaustion, or security breaches once applied.

Engineering Mathematical Certainty in Deployments

Proving Operational Behavior: Temporal Logic in GitOps

Traditional safety nets in platform engineering usually terminate at the boundaries of human peer review, YAML linting, and basic schema validation. While these methods excel at catching syntax errors or simple resource mismatches, they remain fundamentally incapable of managing the intricate complexities inherent in modern distributed systems. As organizations scale, the limitations of these reactive tools become apparent, particularly in high-stakes sectors like finance or healthcare where a “successful” synchronization does not necessarily equate to a successful deployment. This creates a gap known as logical drift, where the intended architectural behavior diverges from the actual logic encoded in the manifests. To solve this, teams are shifting toward formal verification by integrating temporal logic into their pipelines. This methodology moves beyond inspecting what a file looks like to mathematically proving what the infrastructure will do across various state transitions.

The application of mathematical proofs allows engineers to treat deployment manifests as a series of rigorous state changes rather than static text files. By modeling these transitions, it becomes possible to validate resource invariants, ensuring that essential cluster assets such as CPU, memory, and storage IOPS remain available across every scaling path the model covers. This shifts resource management from estimation and trial-and-error toward guarantees that hold for every state in the model. Furthermore, temporal logic provides a way to verify that a configuration will never reach a prohibited state, such as a security violation during a scale-up event. This approach ensures that even as the environment evolves, the core safety properties of the infrastructure remain intact. By moving from simple diffing to formal modeling, platform engineers can achieve a level of predictability that traditional continuous delivery tools do not provide.
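As a rough illustration of checking a resource invariant across scaling paths, the sketch below exhaustively explores a tiny state space with breadth-first search and reports the shortest sequence of scaling steps that breaks the invariant. It is a toy explicit-state check, not a theorem prover, and every name and number in it (the deployments, CPU requests, replica ranges, and cluster capacity) is a hypothetical assumption rather than anything taken from a real cluster.

```python
from collections import deque

# Hypothetical model: per-replica CPU requests (millicores) and the
# autoscaler replica range for each deployment. Illustrative values only.
CPU_PER_REPLICA = {"api": 500, "worker": 1000}
REPLICA_RANGE = {"api": (2, 6), "worker": (1, 4)}


def invariant(state, capacity):
    """Safety property: total requested CPU never exceeds cluster capacity."""
    used = sum(CPU_PER_REPLICA[name] * n for name, n in state)
    return used <= capacity


def successors(state):
    """Each transition scales one deployment up or down by one replica."""
    for i, (name, n) in enumerate(state):
        lo, hi = REPLICA_RANGE[name]
        for m in (n - 1, n + 1):
            if lo <= m <= hi:
                yield state[:i] + ((name, m),) + state[i + 1:]


def find_violation(initial, capacity):
    """Search every reachable state breadth-first.

    Returns the shortest path of states ending in an invariant violation,
    or None when the invariant holds in every reachable state.
    """
    parent = {initial: None}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        if not invariant(state, capacity):
            path = []
            while state is not None:
                path.append(state)
                state = parent[state]
            return path[::-1]
        for nxt in successors(state):
            if nxt not in parent:
                parent[nxt] = state
                queue.append(nxt)
    return None


initial = (("api", 2), ("worker", 1))
trace = find_violation(initial, capacity=6000)
if trace is None:
    print("invariant holds in every reachable state")
else:
    print("counterexample:", " -> ".join(str(dict(s)) for s in trace))
```

With the assumed 6000 millicores of capacity, the search finds a counterexample in which both deployments scale up together. Raising the capacity to 7000 makes the invariant hold for every reachable state in this model. The counterexample trace is the useful part: it shows which scaling path leads to the prohibited state, not merely that one exists.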

Managing Complex Dependencies: The Path to Rollback Integrity

In complex microservices architectures, the sequence of operations is often more critical than the operations themselves. Formal verification provides a robust framework for mastering dependency ordering, particularly during sensitive procedures like database migrations, secret rotations, and security sidecar injections. Standard GitOps tools often struggle with these temporal dependencies, leading to race conditions where a service attempts to start before its required secrets are available or its database schema is updated. Mathematical modeling eliminates these risks by ensuring a deterministic and provably safe order of operations. This rigor transforms the deployment process into a controlled sequence of events where each step is verified against the state of the entire system. Consequently, the volatility associated with multi-service updates is significantly reduced, providing a stable foundation for the most intricate architectural requirements.
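A minimal sketch of deterministic dependency ordering, assuming the rollout steps and their prerequisites can be written down as a graph. The step names here are hypothetical. The example uses `graphlib.TopologicalSorter` from the Python standard library (3.9+) to derive a valid order, plus a small checker that verifies any proposed order against the declared prerequisites.

```python
from graphlib import TopologicalSorter

# Hypothetical rollout steps mapped to the steps that must finish first.
DEPENDS_ON = {
    "rotate-secrets": set(),
    "migrate-db": {"rotate-secrets"},
    "inject-sidecar": {"rotate-secrets"},
    "start-service": {"migrate-db", "inject-sidecar"},
}


def is_safe_order(order, depends_on):
    """True when every step appears after all of its prerequisites."""
    position = {step: i for i, step in enumerate(order)}
    return all(
        position[dep] < position[step]
        for step, deps in depends_on.items()
        for dep in deps
    )


# Derive one valid order from the dependency graph.
safe_order = list(TopologicalSorter(DEPENDS_ON).static_order())
print("derived order:", safe_order)

# An order that starts the service before its secrets exist is rejected.
racy_order = ["start-service", "rotate-secrets", "migrate-db", "inject-sidecar"]
print("racy order safe?", is_safe_order(racy_order, DEPENDS_ON))
```

Separating the checker from the sorter is a deliberate choice in this sketch: the same `is_safe_order` function can validate an order produced by some other tool, which is closer to how a verification gate would sit in front of an existing pipeline.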

Beyond initial deployment safety, formal verification addresses the critical challenge of recovery through rollback integrity. One of the most significant risks in modern infrastructure management is the “broken” sync that leaves a cluster in an unrecoverable or corrupted state. Verification checks confirm whether a system can return to a stable baseline from any point of failure without requiring manual intervention or risking data loss. By validating every intermediate state, engineers ensure that the path back to a known good configuration is always open and mathematically sound. This level of oversight prevents scenarios where a partial failure leaves the infrastructure in a “zombie” state that is neither functional nor easily revertible. This shift toward provable recovery safety ensures that even if a synchronization is interrupted, the platform remains resilient and capable of restoring service automatically, maintaining high availability for end users.
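One way to picture a rollback-integrity check is as a reachability question on a graph of deployment states: from every state a sync can reach, is there still a path back to the baseline? The sketch below assumes a hand-written transition graph with hypothetical state names. A real model would have to be derived from the manifests and the sync tool's behavior.

```python
def reachable(graph, start):
    """Return the set of states reachable from start, including start."""
    seen = {start}
    stack = [start]
    while stack:
        node = stack.pop()
        for nxt in graph.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


def unrecoverable_states(graph, baseline):
    """States reachable from baseline that have no path back to it."""
    return sorted(
        state
        for state in reachable(graph, baseline)
        if baseline not in reachable(graph, state)
    )


# Hypothetical transition graph: forward rollout steps plus rollback edges.
TRANSITIONS = {
    "v1-stable": ["schema-migrated"],
    "schema-migrated": ["v2-rolling", "v1-stable"],
    "v2-rolling": ["v2-stable", "partial-failure"],
    "partial-failure": [],  # no rollback edge has been modeled here
    "v2-stable": ["v1-stable"],
}

print(unrecoverable_states(TRANSITIONS, "v1-stable"))

# Adding a rollback edge out of the failure state closes the gap.
FIXED = dict(TRANSITIONS, **{"partial-failure": ["schema-migrated"]})
print(unrecoverable_states(FIXED, "v1-stable"))
```

In this toy model the `partial-failure` state plays the role of the “zombie” state described above: the rollout can enter it, but nothing leads out until a rollback edge is added.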

Validating Resilience through Empirical Data

Uncovering Hidden Vulnerabilities: Lessons from Production

The practical necessity of shifting to a mathematically grounded gatekeeper is evidenced by findings in large-scale production environments throughout 2026. A comprehensive study of 850 Kubernetes applications across multiple enterprise platforms revealed that formal verification identified hundreds of manifest violations that had bypassed every other layer of defense. These issues were not mere formatting errors or linting failures; they were deep logical flaws that could only be detected through state-based modeling. For instance, the verification process uncovered circular service mesh dependencies that would have caused traffic loops and total service collapse under load. These vulnerabilities existed within manifests that were otherwise valid and synchronized perfectly according to standard tools, showing that a green checkmark can be a false indicator of safety.
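To make the circular-dependency example concrete, the sketch below runs a depth-first search over a call graph and returns the first cycle it finds. The service names and routes are hypothetical, and the graph is assumed to have already been extracted from the mesh routing configuration. This is ordinary cycle detection, shown only to illustrate the kind of flaw that a linter or schema check would not see.

```python
def find_cycle(calls):
    """Return one dependency cycle as a list of services, or None if acyclic."""
    WHITE, GREY, BLACK = 0, 1, 2  # unvisited, on the current path, finished
    colour = {}
    path = []

    def visit(node):
        colour[node] = GREY
        path.append(node)
        for nxt in calls.get(node, ()):
            state = colour.get(nxt, WHITE)
            if state == GREY:
                # nxt is already on the current path, so the path loops back.
                return path[path.index(nxt):] + [nxt]
            if state == WHITE:
                cycle = visit(nxt)
                if cycle:
                    return cycle
        path.pop()
        colour[node] = BLACK
        return None

    for service in calls:
        if colour.get(service, WHITE) == WHITE:
            cycle = visit(service)
            if cycle:
                return cycle
    return None


# Hypothetical routes: each service maps to the services it calls.
ROUTES = {
    "gateway": ["checkout"],
    "checkout": ["payments"],
    "payments": ["fraud"],
    "fraud": ["checkout"],  # closes the loop
    "catalog": [],
}

cycle = find_cycle(ROUTES)
print(" -> ".join(cycle) if cycle else "no cycles")
```

Each of these routes is valid on its own, which is why per-manifest validation passes; the loop only appears once the routes are considered together.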

Furthermore, the integration of formal verification allowed these organizations to identify resource limits that were destined to trigger silent Out-of-Memory kills during peak traffic periods. In many cases, these limits appeared reasonable in isolation but were mathematically proven to be insufficient once the scaling patterns of the entire cluster were taken into account. By catching these issues before they reached production, the verification layer acted as a strong barrier against systemic instability. The data suggests that without this mathematical oversight, nearly thirty percent of the analyzed applications would have suffered preventable outages caused by logical inconsistencies. This highlights the role of formal proofs in modern delivery pipelines as a final check that the infrastructure is not just synchronized but genuinely capable of supporting the intended operational load.
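The arithmetic behind a limit that looks reasonable in isolation but fails at peak can be sketched as follows. This assumes a deliberately simple linear memory model (idle footprint plus growth per request per second) and hypothetical workloads and numbers. It is a worst-case estimate under that assumption, not a proof about real memory behavior.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    memory_limit_mib: int  # container memory limit from the manifest
    base_mib: int          # assumed idle footprint
    mib_per_rps: float     # assumed memory growth per request/second
    max_replicas: int      # autoscaler ceiling


def peak_memory_per_pod(w, peak_rps):
    """Estimated per-pod memory with peak traffic spread over max replicas."""
    return w.base_mib + w.mib_per_rps * (peak_rps / w.max_replicas)


def oom_risks(workloads, peak_rps):
    """Names of workloads whose estimated peak usage exceeds their limit."""
    return [
        w.name
        for w in workloads
        if peak_memory_per_pod(w, peak_rps) > w.memory_limit_mib
    ]


WORKLOADS = [
    Workload("api", memory_limit_mib=512, base_mib=200, mib_per_rps=0.5, max_replicas=4),
    Workload("worker", memory_limit_mib=1024, base_mib=300, mib_per_rps=0.2, max_replicas=10),
]

print(oom_risks(WORKLOADS, peak_rps=3000))
```

Under these assumptions the `api` limit of 512 MiB comfortably covers idle usage, yet even at its maximum of four replicas each pod would need an estimated 575 MiB at 3000 requests per second. The limit and the autoscaler ceiling have to be checked together, which is the point the paragraph above makes.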

Eradicating the Successful Failure: The Future of Stability

Implementing a rigorous mathematical verification layer effectively eliminates the “successful failure” phenomenon, a scenario where synchronization tools report a healthy status while the application is actively degrading. By 2026, the complexity of cloud-native systems has made reliance on human-in-the-loop reviews alone an obsolete strategy for ensuring reliability. Organizations that adopted formal verification saw a 94.3% reduction in potential deployment drift incidents, as the tools provided immediate feedback on the long-term consequences of configuration changes. This proactive approach moves the industry toward a standard where configuration correctness is no longer a matter of visual observation but of mathematical proof. The result is a significantly more stable deployment pipeline that lets developers focus on feature delivery rather than troubleshooting elusive production errors.

The transition toward formal verification in GitOps requires a fundamental shift in how platform teams view infrastructure code. It is no longer sufficient to merely match a repository to a cluster; the focus shifts toward ensuring that the resulting state remains stable under the operational demands the system is expected to face. Engineers are starting to use automated theorem provers to validate their architectures, treating infrastructure with the same discipline applied to high-integrity software in aerospace or medical fields. This evolution lets the green checkmark finally represent what it always promised: a provably secure, operational, and resilient environment. Moving forward, the priority is developing standardized verification models that integrate cleanly with existing continuous delivery workflows. This strategy allows organizations to scale their infrastructure with far greater confidence, knowing that every change is backed by a mathematical proof rather than a visual check.