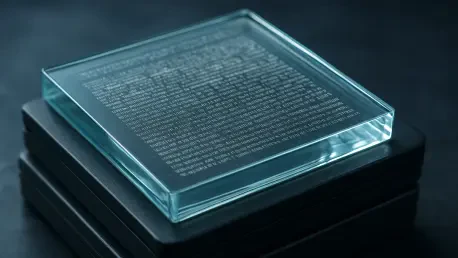

The fragility of modern digital infrastructure remains a silent crisis as the global community generates more data in a single day than was produced in entire decades of the previous century. Current storage solutions, ranging from high-performance solid-state drives to massive magnetic tape libraries, are fundamentally temporary, typically requiring replacement or data migration every five to twenty years to avoid catastrophic bit rot. This relentless cycle of hardware refresh creates a massive financial and environmental burden for data centers, which must constantly move petabytes of information to newer media before the old ones fail. Microsoft’s Project Silica introduces a radical departure from this transient approach by utilizing a medium that has already proven its longevity over millennia: glass. By encoding data within the molecular structure of high-durability glass, the technology promises a “write once, read forever” model that could effectively end the era of digital obsolescence and ensure that the collective knowledge of humanity remains accessible for the next ten thousand years.

Engineering the Eternal Archive

Molecular Precision: The Science of Phase Voxels

The technical foundation of this storage breakthrough lies in the use of femtosecond lasers, which emit ultra-short light pulses to create microscopic distortions within a glass slab. Unlike traditional optical media like CDs or DVDs that store data on the surface, Project Silica embeds information deep within the bulk of the material in three-dimensional structures known as voxels. In the most recent iterations of this technology, researchers have moved toward a “phase voxel” approach, which significantly increases the speed of the writing process. Instead of needing multiple laser passes to define a single bit of information, a single pulse can now create a distinct physical change in the glass that encodes multiple bits through variations in orientation and depth. This level of precision ensures that the data is not merely recorded but is physically integrated into the glass lattice, making it immune to the magnetic fields that routinely wipe traditional hard drives or the chemical degradation that eventually renders archival film and tape unreadable.
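
To make the idea of several bits per voxel concrete, the following is a minimal sketch rather than Microsoft's actual encoding scheme. It assumes a hypothetical voxel that can take one of four orientation states and one of two depth states, which gives eight distinguishable symbols, or three bits per laser pulse. The constants and the `encode` and `decode` helpers are illustrative names, not part of any published Project Silica interface.

```python
# Hypothetical voxel alphabet: these counts are assumptions for illustration only.
ORIENTATIONS = 4   # assumed number of distinguishable orientation states
DEPTH_LEVELS = 2   # assumed number of distinguishable depth states
BITS_PER_VOXEL = (ORIENTATIONS * DEPTH_LEVELS).bit_length() - 1  # log2(8) = 3


def encode(data: bytes) -> list:
    """Split a byte string into (orientation, depth) pairs, one pair per voxel."""
    bits = "".join(f"{byte:08b}" for byte in data)
    # Pad with zeros so the bit string divides evenly into voxel-sized groups.
    bits += "0" * (-len(bits) % BITS_PER_VOXEL)
    voxels = []
    for i in range(0, len(bits), BITS_PER_VOXEL):
        symbol = int(bits[i:i + BITS_PER_VOXEL], 2)
        # divmod maps the symbol 0..7 to orientation 0..3 and depth 0..1.
        voxels.append(divmod(symbol, DEPTH_LEVELS))
    return voxels


def decode(voxels: list, n_bytes: int) -> bytes:
    """Rebuild the original bytes from (orientation, depth) pairs."""
    bits = "".join(
        f"{orientation * DEPTH_LEVELS + depth:0{BITS_PER_VOXEL}b}"
        for orientation, depth in voxels
    )
    # Only the first n_bytes * 8 bits carry data; the rest is padding.
    return bytes(int(bits[i:i + 8], 2) for i in range(0, n_bytes * 8, 8))
```

Under these assumptions a two-byte message needs six voxels instead of sixteen single-bit marks, which is the kind of gain a multi-bit voxel is meant to deliver.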

Beyond the initial recording, the durability of the medium is tested against extreme environmental stressors that would instantly destroy conventional hardware. Because the data is etched into a solid block of glass, it remains stable even when subjected to boiling water, high-pressure washing, or intense heat in ovens. The material properties of the glass provide an inherent shield against electromagnetic pulses, which are a major concern for government and military archives. This physical robustness eliminates the need for the energy-intensive climate-controlled environments that modern data centers require to prevent hardware failure. By removing the necessity for constant cooling and humidity regulation, glass-based storage offers a path toward a much more sustainable digital footprint. The transition from delicate magnetic surfaces to a solid, inert material represents a fundamental shift in how engineers conceptualize data safety, moving away from active maintenance toward passive, structural preservation that requires no power to maintain.

Material Innovation: From Fused Silica to Borosilicate

A critical evolution in the development of Project Silica is the shift from expensive, specialized fused silica to more accessible borosilicate glass. While fused silica provided the initial proof of concept due to its high purity, its manufacturing cost made it difficult to scale for wide commercial adoption. Borosilicate glass, widely recognized for its use in high-quality kitchenware and laboratory equipment, offers a much more favorable balance between durability and cost-effectiveness. This material transition is particularly vital for small and medium-sized enterprises that require long-term data retention but lack the massive capital budgets of global tech giants. By utilizing a material that is already mass-produced at scale, Microsoft has lowered the barrier to entry, making it feasible for a broader range of industries to adopt permanent storage solutions. The use of borosilicate also ensures that the storage slabs are resistant to thermal shock, meaning they can survive rapid temperature fluctuations without cracking or losing the integrity of the stored voxels.

This shift in material science is accompanied by a radical simplification of the hardware required to read the stored data. Early versions of the system relied on complex, high-resolution microscopes that were difficult to calibrate and maintain outside of a laboratory setting. Current advancements have introduced a streamlined optical reading system that uses computer-controlled cameras and polarized light to decode the voxels at high speeds. This improvement in throughput is essential for making glass storage a viable alternative to the automated tape libraries currently used by major cloud providers. By reducing the complexity of both the storage medium and the retrieval hardware, the technology moves closer to a plug-and-play reality for archival departments. The focus is no longer just on how long the data can last, but on how efficiently it can be accessed when needed. This dual focus on material affordability and mechanical simplicity positions borosilicate glass as the leading candidate for the next generation of standardized, global archival infrastructure.

Implementation and Regulatory Challenges

Cultural Preservation: Practical Use Cases for Permanent Media

The immediate value of glass storage is most evident in sectors where data loss is not an option, such as healthcare, legal services, and cultural heritage. For example, medical institutions are often required to keep patient records for decades, a task that currently involves expensive and risky migrations between server generations. Project Silica allows these organizations to create a permanent physical record of genomic data or long-term clinical trials that will never need to be “refreshed.” Similarly, the entertainment industry has begun to see the potential for glass as a master format for film and music. Large-scale demonstrations involving the storage of iconic films like “Superman” have proven that high-definition content can be preserved without the risk of vinegar syndrome or color fading that plagues physical film stock. This provides a bridge between the digital and physical worlds, offering the density of modern digital files with the permanence of a physical artifact that does not rely on a specific proprietary software environment to exist.

For smaller businesses, the primary advantage lies in the significant reduction of long-term operational costs associated with data archiving. Most companies currently pay a recurring “data tax” in the form of cloud storage subscriptions or the labor costs of managing local backups and hardware rotations. With glass storage, the economic model shifts from a recurring expense to a one-time capital investment. Once a company writes its core intellectual property, legal contracts, or historical financial records to a glass slab, those assets are protected indefinitely without further intervention. This “set it and forget it” capability is revolutionary for organizations that need to maintain deep archives but do not have the IT staff to manage complex data lifecycle policies. By providing a medium that survives for ten thousand years, Microsoft is essentially offering a way to opt out of the hardware replacement cycle, allowing businesses to focus their resources on innovation rather than basic data survival and maintenance.
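
The shift from a recurring expense to a one-time investment can be expressed as a simple break-even calculation. The sketch below is illustrative only: the function name `break_even_year` is hypothetical, and any figures passed to it are placeholders, since no pricing for glass storage appears in this article.

```python
import math


def break_even_year(one_time_cost: float, annual_recurring_cost: float) -> int:
    """Return the first whole year in which cumulative recurring spending
    reaches or exceeds the one-time cost."""
    if annual_recurring_cost <= 0:
        raise ValueError("annual_recurring_cost must be positive")
    return math.ceil(one_time_cost / annual_recurring_cost)


# Placeholder figures, not real prices: a 50,000 one-time outlay
# compared with 12,000 per year in subscriptions and hardware rotation.
years = break_even_year(50_000, 12_000)
```

An archive that must be kept longer than the break-even period favors the one-time model, provided the up-front cost is realistic for the organization.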

Regulatory Friction: Reconciling Permanence with Privacy

The very permanence that makes Project Silica so attractive also introduces significant challenges when viewed through the lens of modern data privacy regulations. Laws like the General Data Protection Regulation (GDPR) often grant individuals the “right to be forgotten,” which requires companies to permanently delete specific personal data upon request. On a storage medium designed to be physically immutable and indestructible, the traditional method of “deleting” a file by overwriting it or erasing a magnetic sector is technically impossible. This creates a legal and technical paradox: how can a company comply with a mandate for data destruction on a medium that was engineered specifically to prevent it? This conflict may necessitate the development of new logical layers of encryption, where the data remains on the glass but the decryption keys are destroyed, effectively rendering the information inaccessible. However, whether such “cryptographic erasure” will satisfy future regulatory bodies remains a subject of intense debate among legal experts.
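
The cryptographic erasure idea can be sketched in a few lines. This is a toy model under stated assumptions, not a description of how Project Silica or any regulator-approved system works: the `GlassArchive` class is hypothetical, and the SHAKE-256 keystream is a stand-in for the vetted authenticated cipher a real deployment would use. The point is only that the ciphertext stays on the immutable medium while the key lives in a separate, deletable store.

```python
import hashlib
import secrets


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    # Toy keystream for illustration; a real system would use a vetted cipher.
    return hashlib.shake_256(key + nonce).digest(length)


def _xor(data: bytes, stream: bytes) -> bytes:
    return bytes(a ^ b for a, b in zip(data, stream))


class GlassArchive:
    """Hypothetical write-once store: ciphertext is permanent, keys are not."""

    def __init__(self):
        self._slab = {}  # record_id -> (nonce, ciphertext); entries are never removed
        self._keys = {}  # record_id -> key; kept off the glass and deletable

    def write(self, record_id: str, plaintext: bytes) -> None:
        if record_id in self._slab:
            raise ValueError("write-once medium: record already exists")
        key = secrets.token_bytes(32)
        nonce = secrets.token_bytes(16)
        stream = _keystream(key, nonce, len(plaintext))
        self._slab[record_id] = (nonce, _xor(plaintext, stream))
        self._keys[record_id] = key

    def read(self, record_id: str) -> bytes:
        nonce, ciphertext = self._slab[record_id]
        key = self._keys[record_id]  # raises KeyError once the key is destroyed
        return _xor(ciphertext, _keystream(key, nonce, len(ciphertext)))

    def forget(self, record_id: str) -> None:
        # Cryptographic erasure: destroy the key, leave the ciphertext in place.
        self._keys.pop(record_id, None)
```

After `forget` is called, the encrypted bytes are still physically present, which is exactly why the legal status of this approach is contested.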

Furthermore, the adoption of glass storage requires a complete overhaul of industry standards for data centers and archival workflows. Current systems are built around the assumption that storage is a temporary commodity, with software and hardware designed for high-frequency writes and deletes. Integrating a “write once” medium like glass into these existing ecosystems requires new middleware and specialized hardware for both the laser-etching process and the high-speed optical reading. Organizations will need to carefully categorize their data to distinguish between “hot” data that changes frequently and “cold” data that is suitable for permanent glass storage. This categorization process, combined with the initial cost of specialized reading and writing equipment, means that the transition will be a strategic, multi-phase undertaking rather than an overnight swap. As the technology moves toward full commercialization, the focus will be on creating standardized formats that allow different systems to interact with the glass media, ensuring that the data remains readable even as the companies that created the hardware evolve or disappear.
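
The hot versus cold categorization described above could start as a simple rule applied to each dataset. The sketch below is one possible approach under assumed thresholds: the `DatasetStats` fields, the `storage_tier` function, and the default cutoffs of one year without writes and ten years of required retention are all hypothetical and would need to be tuned to an organization's own policies.

```python
from dataclasses import dataclass


@dataclass
class DatasetStats:
    name: str
    days_since_last_write: int  # how long the data has gone unchanged
    retention_years: int        # how long the data must be kept


def storage_tier(
    dataset: DatasetStats,
    cold_after_days: int = 365,     # assumed threshold, not an industry standard
    min_retention_years: int = 10,  # assumed threshold, not an industry standard
) -> str:
    """Return "glass" for stable, long-retention data, else "conventional"."""
    is_cold = dataset.days_since_last_write >= cold_after_days
    is_long_lived = dataset.retention_years >= min_retention_years
    return "glass" if is_cold and is_long_lived else "conventional"


# Illustrative inventory; the names and numbers are made up.
inventory = [
    DatasetStats("contracts-2009", days_since_last_write=4000, retention_years=25),
    DatasetStats("orders-db", days_since_last_write=0, retention_years=7),
]
plan = {d.name: storage_tier(d) for d in inventory}
```

Requiring both conditions keeps frequently edited data and short-lived data off a medium that cannot be rewritten.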

The transition to glass-based storage represents a fundamental shift in how society perceives the longevity of information, moving beyond the limitations of temporary digital media. To successfully integrate this technology, organizations should begin by auditing their existing archival workflows to identify high-value, “cold” data that would benefit most from physical permanence. This includes core intellectual property, legal documentation, and historical records that are currently subject to the risks and costs of frequent migration. While the initial investment in specialized hardware may be significant, the long-term reduction in energy consumption and hardware refresh cycles offers a clear path toward both financial and environmental sustainability. Future considerations must prioritize the development of robust cryptographic key management systems to address privacy regulations, ensuring that the “right to be forgotten” can be honored even on an indestructible medium. By treating data storage as a permanent architectural decision rather than a recurring operational task, businesses will be able to safeguard their digital legacy for the coming millennia.