As global enterprises integrate generative artificial intelligence into every layer of their operations, the ability to demonstrate absolute control over sensitive data has transitioned from a legal niche to a fundamental business necessity. Business leaders today are navigating a landscape where

The global business landscape has undergone a radical transformation where digital infrastructure is no longer just a support function but the very foundation upon which modern commerce is built. Microsoft Azure has successfully positioned itself at the center of this revolution, evolving from a

The strategic renewal of the multi-year partnership between the London Stock Exchange Group and Broadcom signifies a pivotal moment for the stability of global capital markets as they transition to more specialized infrastructure. As the financial sector faces unprecedented pressure to modernize

The calculated transformation of a consumer-focused hardware provider into the ultimate architect of the generative intelligence era demonstrates the profound long-term impact of strategic enterprise acquisitions. By tracing the evolution from the landmark EMC acquisition in 2016 to the current

The technological landscape of current enterprise operations is defined by the rapid rise of "agentic AI," where autonomous systems perform complex tasks with very little human oversight. This shift has brought a major economic challenge to center stage: "tokenomics." Originally a term from the

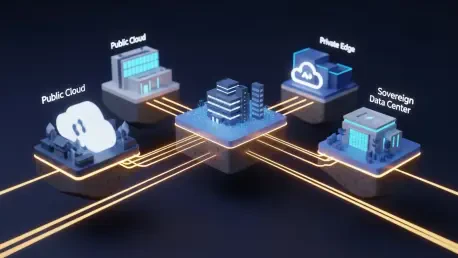

The era of blind, unchecked migration toward hyperscale environments has reached a definitive turning point as businesses prioritize functional utility over the allure of total outsourcing. For over a decade, the dominant narrative in enterprise technology was a relentless, one-way journey toward
