The global transition toward autonomous digital infrastructure has reached a critical juncture with the introduction of a processor designed specifically to coordinate the unpredictable workloads of modern data centers. This development signals a profound strategic pivot for Arm, moving beyond its legacy as a provider of architectural blueprints to become a formidable hardware competitor. By launching a dedicated Artificial General Intelligence (AGI) CPU, the firm is addressing a fundamental shift in the global semiconductor landscape. Rather than competing directly with graphics processing units on raw floating-point performance, this new hardware targets the sophisticated management required to coordinate massive clusters of specialized accelerators.

This move underscores the persistent relevance of the technology sector in the United Kingdom as a hub for deep tech innovation. While the industry has been captivated by the sheer power of GPUs, the reality of the 2026 data center environment demands a more nuanced approach to sequential processing. As the ecosystem for AI agents expands, the need for a central “orchestrator” becomes paramount. These agents do not merely crunch numbers; they require logical reasoning, task prioritization, and complex scheduling. Arm is positioning its silicon as the necessary brain that directs the muscle of third-party accelerators, ensuring that the disparate components of a gigawatt-scale facility work in a synchronized manner.

Emerging Paradigms in Data Center Efficiency and Market Dynamics

Trends in Specialized Silicon and the Rise of AI Orchestration

The industry is currently witnessing a decisive transition from general-purpose GPUs toward application-specific integrated circuits (ASICs) tailored for distinct neural network architectures. Hyperscalers such as Google, Meta, and Microsoft are no longer content with off-the-shelf solutions; they are reshaping hardware demands to suit their internal software stacks. This shift has created a vacuum in the orchestration layer, where traditional server CPUs often struggle to keep pace with the rapid-fire demands of agentic workloads. The need for tight coordination has never been greater, because these autonomous agents depend on constant interaction between memory, compute, and networking.

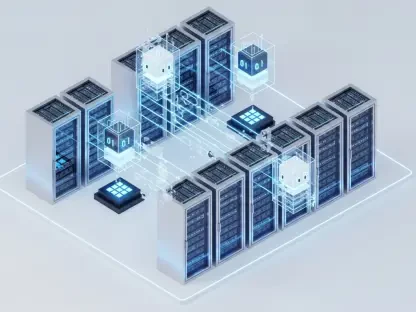

To counter the threat of proprietary ecosystem lock-in, the market is moving toward open standards like Compute Express Link (CXL) 3.0. This technology allows for the disaggregation of memory and compute, enabling a more fluid distribution of resources across the data center. By adopting these standards, Arm ensures that its AGI CPU remains compatible with a wide array of third-party accelerators. This flexibility is essential for enterprise customers who want to avoid being tethered to a single hardware vendor. Consequently, the orchestration of these complex systems is becoming the new battlefield for semiconductor dominance, with efficiency and interoperability serving as the primary metrics for success.

Financial Projections and the Expanding Horizon of AI Compute

Economic forecasts for the Arm AGI CPU are aggressive, with an annual revenue target of $15 billion anticipated within a few years of full-scale deployment. This growth trajectory is supported by deep-rooted partnerships with industry titans like Meta, OpenAI, and Cloudflare. These organizations are searching for hardware that can handle the massive throughput required for generative AI while maintaining strict power efficiency. The performance indicators for this new silicon are impressive, featuring 136 Neoverse V3 cores designed to operate within high-density liquid-cooled rack configurations. These setups allow for an unprecedented amount of compute power to be packed into a smaller physical footprint.

Market adoption is expected to span diverse sectors, including enterprise software, telecommunications, and sovereign generative AI projects. As telecommunications providers transition to 6G and advanced edge computing, the demand for localized, efficient AI orchestration will surge. This broad applicability provides a stable foundation for Arm’s financial ambitions. Furthermore, the shift toward high-density 200 kW racks demonstrates a move away from traditional air-cooled facilities, signaling a new era of infrastructure design. As these technologies mature between 2026 and 2030, the revenue streams associated with the orchestration layer are likely to become as significant as those for the accelerators themselves.

Overcoming the Bottlenecks of Scale and Computational Variability

The management of computational variability remains one of the most significant hurdles in modern data center operations. Deterministic performance design is the cornerstone of the AGI CPU, specifically addressing the “peaks and troughs” typically associated with multithreading. By ensuring that every core provides a predictable level of throughput, Arm allows data center operators to schedule workloads with extreme precision. This is particularly vital for real-time AI applications where latency spikes can degrade the user experience or cause synchronization errors across distributed systems.
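The scheduling benefit of predictable per-core throughput can be illustrated with a small sketch. It assumes a synchronized batch in which every core receives the same amount of work and the batch finishes only when the slowest core does. The function name and all throughput figures are hypothetical and chosen for illustration; they are not measurements of any Arm hardware.

```python
def batch_completion_time(work_per_core: float, core_throughputs: list[float]) -> float:
    """Return the time for a synchronized batch to finish.

    Each core gets `work_per_core` units of work; the batch completes
    when the slowest core completes, so the maximum per-core time wins.
    """
    if not core_throughputs or any(t <= 0 for t in core_throughputs):
        raise ValueError("every core needs a positive throughput")
    return max(work_per_core / t for t in core_throughputs)


# Hypothetical figures: both sets of cores average 100 units per second.
steady_cores = [100.0, 100.0, 100.0, 100.0]
jittery_cores = [130.0, 110.0, 90.0, 70.0]

steady_time = batch_completion_time(1000.0, steady_cores)
jittery_time = batch_completion_time(1000.0, jittery_cores)

print(f"steady:  {steady_time:.2f} s")
print(f"jittery: {jittery_time:.2f} s")
```

Under these assumptions, the two sets of cores have the same average throughput, yet the variable set finishes later because the slowest core sets the pace. This is the kind of “peaks and troughs” effect that a deterministic design is meant to remove from the scheduler's calculations.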

Strategies for managing extreme power density are also at the forefront of this hardware evolution. In an environment where a single rack can consume 200 kW, the complexity of the orchestration layer rises sharply. Coordinating power delivery, cooling cycles, and workload distribution at this scale requires a level of integration that general-purpose processors were never designed to handle. Arm is responding to competitive pressure from vertically integrated silicon providers by offering a platform that balances performance with thermal management. By addressing orchestration-layer complexity in gigawatt-scale operations, the firm aims to set a new standard for how massive digital infrastructures should be governed.
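To give a sense of the scale involved, the following back-of-the-envelope sketch estimates how many 200 kW racks a one-gigawatt power budget could feed. The helper name is hypothetical, and the power usage effectiveness (PUE) value of 1.25 in the second call is an illustrative assumption, not a figure from Arm or any operator.

```python
def racks_supported(facility_watts: float, rack_watts: float, pue: float = 1.0) -> int:
    """Estimate how many full racks a facility power budget can feed.

    `pue` (power usage effectiveness) is total facility power divided by
    IT power; 1.0 means every watt reaches the racks, which is an idealized
    lower bound that ignores cooling and distribution overhead.
    """
    if rack_watts <= 0 or pue < 1.0:
        raise ValueError("rack_watts must be positive and pue at least 1.0")
    it_power = facility_watts / pue
    return int(it_power // rack_watts)


GIGAWATT = 1_000_000_000  # facility budget in watts
RACK_DRAW = 200_000       # one 200 kW rack, in watts

ideal_racks = racks_supported(GIGAWATT, RACK_DRAW)
realistic_racks = racks_supported(GIGAWATT, RACK_DRAW, pue=1.25)  # assumed overhead

print(ideal_racks)      # 5000
print(realistic_racks)  # 4000
```

Even in this simplified model, a gigawatt-scale site means coordinating thousands of racks, which is why the orchestration layer becomes a design problem in its own right.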

The Regulatory Environment and National Tech Sovereignty

As high-end semiconductor design becomes a matter of national importance, the impact of UK tax contributions and local investment from the Cambridge hub cannot be overstated. Arm remains a vital asset for the United Kingdom, providing thousands of high-skilled jobs and anchoring a wider ecosystem of deep tech startups. However, the implications of international ownership and US-based listings continue to spark debate regarding national tech sovereignty. Policymakers are increasingly focused on ensuring that critical technology assets remain secure and that the intellectual property generated within their borders continues to benefit the local economy.

Compliance with global standards for energy efficiency and hardware safety is another regulatory pillar shaping the industry. Governments are introducing stricter mandates for data center energy consumption, forcing hardware providers to innovate at the architectural level. Security considerations also play a major role, especially in the orchestration of autonomous AI agents that handle sensitive enterprise data. The AGI CPU incorporates hardware-level security features designed to protect against unauthorized access and data leakage. This focus on “secure orchestration” is becoming a key selling point for national governments looking to build sovereign AI infrastructure that is both powerful and resilient against external threats.

The Future of Sovereign AI and Autonomous Digital Infrastructure

The role of high-end semiconductor design is evolving as national governments seek to establish their own autonomous digital infrastructure. Sovereign AI is no longer a theoretical concept; it is a strategic requirement for nations that wish to maintain data independence. The integration of AGI CPUs in global edge networks and hyperscale facilities will allow for localized processing that respects national data boundaries. This shift creates a massive opportunity for Arm to supply the foundational technology for these government-led initiatives. The ability to provide “design brilliance” ensures that the firm remains relevant in a world that is moving beyond the mobile computing era.

Anticipating the future, the convergence of high-end CPUs and global edge networks will redefine how we interact with digital services. Autonomous agents will reside closer to the user, requiring a new class of compute that is both powerful and incredibly efficient. The AGI CPU is designed to meet these requirements, providing the necessary logical framework for edge-based intelligence. As global enterprises and national governments invest in these next-generation networks, the demand for specialized orchestration will only intensify. This landscape offers a clear path for Arm to maintain its influence, serving as the primary architect for the autonomous digital infrastructure that will power the next decade of innovation.

Summary of Arm’s Strategic Trajectory in the AI Era

The emergence of the CPU as the logical brain of the data center represents a significant shift in architectural philosophy. While GPUs provide the necessary brawn for training models, the AGI CPU offers the coordination required for complex inference and agentic logic. This strategic maneuver allows Arm to avoid a direct confrontation with established accelerator giants while becoming an indispensable partner in their deployment. For investors, the orchestration market is not just a niche but a critical component of the entire AI infrastructure stack, one that offers long-term stability and growth.

The resilience of the tech ecosystem in the United Kingdom is further solidified by this high-end silicon innovation, which suggests that localized engineering can drive global trends. Stakeholders would do well to focus on integrating these orchestration layers into broader enterprise workflows to maximize efficiency. The AGI CPU is designed to address the challenges of power density and deterministic performance, setting a benchmark for future hardware developments. Ultimately, the transition toward a specialized orchestration model provides the framework for the next era of autonomous digital systems, helping to ensure that the underlying infrastructure remains robust, secure, and capable of supporting the burgeoning world of artificial general intelligence.