Embracing a New Era of Corporate Intelligence and Autonomy

The rapid spread of generative models has changed the calculus for enterprise technology officers, who must now decide whether to rent intelligence or own it. Businesses are weighing open-source Artificial Intelligence (AI) against proprietary, closed systems, and as organizations pursue digital transformation, that choice has become a cornerstone of long-term strategic planning. Choosing an AI strategy is no longer just a technical decision; it is a foundational move that dictates how a company handles its data, manages its costs, and maintains its competitive edge.

This analysis explores the nuances of “openness” in AI, the strategic benefits of control and sovereignty, and the operational shifts required to use these tools effectively. It aims to give leaders a clearer understanding of why moving toward open architectures is becoming a prerequisite for resilient business growth. Organizations that can balance the convenience of managed services with the security of localized, inspectable codebases stand to benefit most. The shift is not merely about cost reduction but about ensuring that a firm’s intellectual property remains within its own digital perimeter.

The Evolution of Openness from Software to Artificial Intelligence

To understand the current state of AI, one must look at the historical context of open-source software. Since the late 1990s, the Open Source Definition (OSD) has provided a framework under which technologies like Linux and Apache helped revolutionize the internet through transparency and collaborative development. However, AI is a multi-layered technology that does not fit neatly into these historical categories, leading to a “definitional crisis.” In software, true openness has meant access to the underlying source code; in AI, the equivalent extends to the models, the weights, and the training data.

Understanding this shift is vital because the industry is currently moving away from “black box” proprietary systems toward a landscape where transparency is the primary driver of trust and innovation. The historical reliance on a handful of large-scale vendors is giving way to a more fragmented and customized approach. This transformation reflects a broader desire for transparency, where the ability to audit an algorithm is as important as the output it generates. As the underlying mathematics of these models becomes more commoditized, the value has shifted toward the specific data and the governance structures that surround it.

Navigating the Strategic and Operational Landscape of Open AI

Distinguishing Genuine Open Source from Proprietary Restrictions

One of the primary challenges in the current discourse is ambiguous terminology. The practice of marketing restricted models as open is often called “open washing.” Many models marketed as open are actually “open weight” models, meaning users receive the parameters but are denied access to the training data or the specific methodology used to create them. For example, while certain high-profile model series are discussed in the context of open innovation, they often include commercial restrictions that prevent them from being truly open in the traditional sense.

For a business, this distinction is critical because the primary value of true openness—auditability and independent verification—is only fully realized when the entire stack is transparent. Analyzing these nuances allows firms to avoid the pitfalls of “semi-open” systems that may still limit their long-term flexibility. A company that builds its infrastructure on a model with restrictive licenses may find itself unable to pivot when those licenses are updated. Therefore, evaluating the licensing fine print is now a mandatory step for legal and technical teams alike.

Securing Digital Sovereignty and Avoiding Vendor Lock-in

The business case for open-source AI is building rapidly around the concept of organizational autonomy. Relying solely on proprietary Application Programming Interfaces (APIs) provides an easy entry point but creates “compounding dependencies,” such as unpredictable pricing and opaque data handling. Enterprises are increasingly wary of a “reckoning” similar to the vendor lock-in experienced during the early cloud migrations. By adopting open models, companies can maintain full ownership of their models and training data, which is especially vital in regulated sectors like finance and healthcare.

In these industries, digital sovereignty is not merely a preference but a requirement for compliance and long-term stability, ensuring that a business is never held hostage by a single provider’s shifting terms. Furthermore, maintaining local control over AI assets allows for more predictable budgeting and operational continuity during service outages. When an organization hosts its own weights, a third party can no longer deprecate the model overnight, because the organization’s own copy keeps running. This stability is a significant advantage for mission-critical applications that require years of consistent performance.

Optimizing Performance Through Specialized and Task-Specific Models

A common misconception is that open-source AI cannot compete with the massive “frontier” models developed by tech giants. However, industry trends suggest that for most enterprise applications, specificity beats scale. While proprietary models lead on general reasoning benchmarks, they are often overkill for specific corporate workflows. A fine-tuned open model, tailored to a particular internal dataset, can frequently outperform a general-purpose model in terms of speed, accuracy, and cost-predictability.

By looking past public leaderboards and focusing on internal metrics, businesses can address misconceptions about “smaller” models and leverage them for mission-critical platforms where precision and efficiency are more valuable than broad, general intelligence. These specialized models also consume significantly fewer computational resources, leading to lower energy costs and a smaller carbon footprint. The ability to prune and quantize open models further enables them to run on edge devices, bringing intelligence closer to the point of data collection and reducing latency for real-time applications.
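As a rough illustration of what quantizing a model involves, the sketch below applies symmetric 8-bit quantization to a short list of weights in plain Python. It covers quantization only, not pruning, and it is a minimal sketch under stated assumptions: the function names `quantize_int8` and `dequantize` and the sample weights are hypothetical, and production toolchains use more elaborate schemes (per-channel scales, calibration data) than this.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to signed 8-bit integers using one shared scale factor.

    Illustrative only: a single per-tensor scale, no calibration.
    """
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Avoid division by zero when every weight is 0.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]


# Hypothetical sample weights, chosen only for demonstration.
weights = [0.5, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The rounding error per weight is bounded by half the scale factor.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each weight now occupies one byte instead of four (for 32-bit floats), at the cost of a small, bounded rounding error. That trade is what makes deployment on constrained edge hardware plausible.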

Anticipating the Future of Hybrid AI Ecosystems

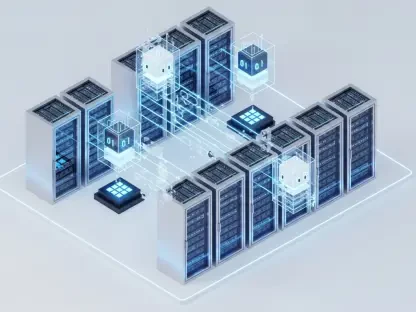

Looking forward, the industry is moving toward a hybrid ecosystem where proprietary and open-source tools coexist. Emerging trends suggest that while proprietary APIs will remain the standard for general “daily assistants,” open-source models will serve as the backbone for high-stakes, internal transformation projects. Technological and regulatory changes are likely to favor models that offer greater transparency and support local deployment. Experts predict that the ability to modify and inspect AI will become a standard requirement for corporate governance.

This shift will likely reward organizations that build “constitutional” governance structures today, preserving their optionality as the market consolidates and matures. The convergence of decentralized computing and open-source intelligence will likely lead to models that are not only more secure but also more ethically aligned with specific organizational values. As global regulations tighten around algorithmic accountability, having a transparent model that can be explained to regulators will become a significant competitive advantage. This hybrid approach allows for rapid experimentation with cloud-based tools while protecting core assets through private, open-source deployments.

Practical Strategies for Implementing Open Source AI

To successfully transition to an open-source strategy, businesses must move from being mere consumers to becoming active operators. This requires a focus on actionable best practices, starting with the execution of pilot projects to test performance and understand true operational costs. Organizations should prioritize building flexible architectures that do not lock them into a single ecosystem, allowing them to swap models as technology evolves. Furthermore, it is essential to invest in human capital—specifically in Machine Learning Operations (MLOps)—to manage the governance, maintenance, and security of deployed models.
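One way to keep an architecture flexible enough to swap models is to have application code depend on a small interface rather than on any one vendor’s client. The following is a minimal sketch of that idea. Every name in it (`TextModel`, `LocalOpenModel`, `HostedApiModel`, `ModelRouter`) is hypothetical, and the two backends are placeholders that return tagged strings instead of calling a real model.

```python
from typing import Dict, Optional, Protocol


class TextModel(Protocol):
    """Minimal contract every backend must satisfy (hypothetical)."""

    def generate(self, prompt: str) -> str: ...


class LocalOpenModel:
    """Placeholder for a self-hosted open-weight model."""

    def generate(self, prompt: str) -> str:
        return f"[local] {prompt}"


class HostedApiModel:
    """Placeholder for a proprietary hosted API."""

    def generate(self, prompt: str) -> str:
        return f"[hosted] {prompt}"


class ModelRouter:
    """Routes requests to whichever registered backend is active."""

    def __init__(self) -> None:
        self._backends: Dict[str, TextModel] = {}
        self._active: Optional[str] = None

    def register(self, name: str, backend: TextModel) -> None:
        self._backends[name] = backend

    def activate(self, name: str) -> None:
        if name not in self._backends:
            raise KeyError(f"unknown backend: {name}")
        self._active = name

    def generate(self, prompt: str) -> str:
        if self._active is None:
            raise RuntimeError("no backend activated")
        return self._backends[self._active].generate(prompt)


router = ModelRouter()
router.register("hosted", HostedApiModel())
router.register("local", LocalOpenModel())

# Application code calls router.generate() and never names a vendor.
router.activate("hosted")
first = router.generate("summarize Q3")
router.activate("local")
second = router.generate("summarize Q3")
print(first, second, sep="\n")
```

Because callers only see `generate`, moving a workload from a hosted API to a self-hosted model becomes a configuration change rather than a rewrite.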

By treating AI as a core competency rather than an external service, firms can ensure their systems remain secure, compliant, and highly tailored to their specific needs. This involves establishing rigorous model evaluation frameworks to detect bias and monitor performance over time. Collaboration with the broader open-source community can also provide access to cutting-edge security patches and optimizations that a single company could not develop on its own. Ultimately, the successful implementation of an open-source strategy depends on a culture of continuous learning and a willingness to contribute back to the technological commons.
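A model evaluation framework can start very small. The sketch below computes accuracy per group on a labeled evaluation set and flags the model when the gap between the best-served and worst-served group exceeds a threshold. It is an illustrative assumption rather than a prescribed method: the record layout, the group names, the sample data, and the 0.2 threshold are all hypothetical, and a real bias audit would use more than a single accuracy gap.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# (group, expected label, predicted label); a hypothetical record layout.
Record = Tuple[str, str, str]


def accuracy_by_group(records: Iterable[Record]) -> Dict[str, float]:
    """Return the fraction of correct predictions for each group."""
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for group, expected, predicted in records:
        total[group] += 1
        if expected == predicted:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


def largest_gap(scores: Dict[str, float]) -> float:
    """Difference between the best and worst per-group accuracy."""
    if not scores:
        return 0.0
    return max(scores.values()) - min(scores.values())


# Made-up evaluation records, for demonstration only.
records = [
    ("a", "approve", "approve"), ("a", "deny", "deny"),
    ("a", "approve", "approve"), ("a", "deny", "deny"),
    ("b", "approve", "deny"), ("b", "deny", "deny"),
    ("b", "approve", "approve"), ("b", "deny", "approve"),
]

GAP_THRESHOLD = 0.2  # assumed tolerance; set per use case

scores = accuracy_by_group(records)
gap = largest_gap(scores)
flagged = gap > GAP_THRESHOLD
print(scores, gap, flagged)
```

Running the same check on a fixed evaluation set after every model update gives a simple time series, so a widening gap or falling accuracy becomes visible as it develops.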

Final Reflections on the Value of Open AI Strategy

In summary, the choice to integrate open-source AI into a business strategy represents a move toward freedom, control, and long-term resilience. Navigating the complexities of “open washing,” securing digital sovereignty, and prioritizing task-specific performance can create a significant competitive advantage. The topic matters because, as AI becomes further embedded in every layer of the enterprise, the ability to inspect and own that technology will increasingly define the leaders of the next industrial era.

Businesses that establish a sovereign AI capability are best positioned to innovate without permission and scale without limitations. The strategy favors those who move beyond the simplicity of proprietary APIs to embrace the complexity of local deployments and fine-tuned architectures. Leaders should formalize their AI governance frameworks and begin internalizing their most valuable models. By prioritizing transparency and autonomy, organizations can keep their digital future under their direct control rather than subject to the whims of a volatile marketplace. Going forward, the focus shifts from mere adoption to mastery of these open-source assets.