What happens when the insatiable hunger for data in AI-driven environments outpaces the very infrastructure meant to support it? Data centers worldwide are grappling with this challenge as AI workloads skyrocket, demanding unprecedented speed and scalability. Enter FS with a groundbreaking solution—a 200G networking switch designed to revolutionize AI data centers. This cutting-edge hardware promises to tackle the bottlenecks of modern networking, ensuring that enterprises can keep up with the relentless pace of technological advancement.

The significance of this launch cannot be overstated. As AI applications permeate every sector, from healthcare to finance, the backbone of these operations must evolve to handle massive data volumes without faltering. This new switch, known as the N8550-24CD8D, stands as a beacon of innovation, addressing the urgent need for high-speed, adaptable infrastructure. It’s not just a piece of equipment; it’s a strategic asset for organizations aiming to stay ahead in a data-driven world.

Redefining Speed: The Urgency of 200G in AI Infrastructure

The explosion of AI technologies has created a perfect storm for data centers, where traditional networking solutions are buckling under pressure. With data traffic expected to grow exponentially from 2025 to 2027, the shift from 100G to 200G and even 400G architectures is no longer optional—it’s imperative. This switch arrives at a critical juncture, offering a lifeline to enterprises struggling with latency and packet loss in high-stakes environments.

Beyond mere speed, the broader implications for AI data centers are profound. Hybrid infrastructures, often caught in transition between older and newer systems, require solutions that bridge these gaps without massive overhauls. The introduction of a 200G switch by FS signals a shift toward future-proofing networks, ensuring that AI-driven operations can scale seamlessly as demands intensify.

Unpacking the Powerhouse: Key Features of the N8550-24CD8D

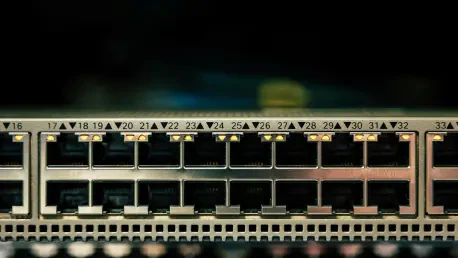

At the heart of this innovation lies a robust design tailored for high-density AI environments. The N8550-24CD8D boasts 24 200G ports, each configurable into two 100G or four 50G connections, providing unmatched flexibility for diverse equipment setups. Additionally, eight 400G uplinks enable seamless integration with core spine layers, paving the way for a gradual transition to next-gen architectures.
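To make those port counts concrete, the short sketch below works out aggregate capacity and the leaf-to-spine oversubscription ratio. Only the port counts and speeds come from the figures above. The `PortGroup` class and its helpers are illustrative names, and the sketch assumes every 200G port can be broken out at the same time, which the vendor's documentation would need to confirm.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PortGroup:
    """A set of identical ports: how many there are and how fast each one is."""
    count: int
    speed_gbps: int

    @property
    def capacity_gbps(self) -> int:
        return self.count * self.speed_gbps

    def breakout(self, ways: int) -> "PortGroup":
        """Split every port into `ways` equal links (2 -> 100G, 4 -> 50G)."""
        if ways not in (1, 2, 4):
            raise ValueError("only 2x100G and 4x50G breakouts are described")
        return PortGroup(self.count * ways, self.speed_gbps // ways)


# Port counts as stated in the article.
DOWNLINKS = PortGroup(count=24, speed_gbps=200)
UPLINKS = PortGroup(count=8, speed_gbps=400)


def oversubscription(down: PortGroup, up: PortGroup) -> float:
    """Ratio of server-facing capacity to spine-facing capacity."""
    return down.capacity_gbps / up.capacity_gbps


if __name__ == "__main__":
    print(DOWNLINKS.capacity_gbps)               # 4800
    print(UPLINKS.capacity_gbps)                 # 3200
    print(oversubscription(DOWNLINKS, UPLINKS))  # 1.5
    print(DOWNLINKS.breakout(2))                 # PortGroup(count=48, speed_gbps=100)
```

With every port in use at line rate, the access side offers 4.8 Tbps against 3.2 Tbps of uplink, a 1.5:1 ratio. Breaking ports out changes the number of attached devices but not that ratio.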

Further enhancing its appeal, the switch supports advanced protocols like EVPN-VXLAN, which extends Layer 2 networks across a Layer 3 underlay, a boon for cloud-based systems. Multi-Chassis Link Aggregation (MLAG) lets a pair of switches present a single logical link-aggregation group to attached devices, adding redundancy and simplifying management. Powered by the Broadcom Trident 4 chip with substantial data buffers, it is built to sustain rapid, reliable data transfer even under intense workloads.

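Perhaps most notably, its role as an aggregation leaf in AI storage networks stands out. RoCEv2 carries RDMA traffic over routed Ethernet and performs best on a lossless or near-lossless fabric. Priority Flow Control (PFC) pauses individual traffic classes before buffers overflow, and Explicit Congestion Notification (ECN) marks packets so that senders slow down before loss occurs. Together these reduce latency for server-storage communications, which makes the switch a strong fit for organizations managing complex, data-intensive AI applications.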
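As a rough illustration of how ECN keeps queues short, the sketch below implements the threshold-based marking curve commonly used with RED/WRED-style queue management. It is a generic model, not this switch's configuration: the function name, the kilobyte thresholds, and the maximum marking probability are all assumed values chosen for the example.

```python
def ecn_mark_probability(queue_kb: float, k_min_kb: float,
                         k_max_kb: float, p_max: float) -> float:
    """Probability that a packet is ECN-marked at a given queue depth.

    Below k_min nothing is marked, above k_max everything is marked,
    and in between the probability rises linearly from 0 to p_max.
    """
    if not 0 <= k_min_kb < k_max_kb:
        raise ValueError("need 0 <= k_min_kb < k_max_kb")
    if not 0.0 <= p_max <= 1.0:
        raise ValueError("p_max must be between 0 and 1")
    if queue_kb <= k_min_kb:
        return 0.0
    if queue_kb >= k_max_kb:
        return 1.0
    return p_max * (queue_kb - k_min_kb) / (k_max_kb - k_min_kb)


if __name__ == "__main__":
    # Hypothetical thresholds, for illustration only.
    for depth_kb in (50, 250, 400):
        p = ecn_mark_probability(depth_kb, k_min_kb=100, k_max_kb=400, p_max=0.2)
        print(f"queue={depth_kb} KB -> mark probability {p:.2f}")
```

In deployments that combine the two mechanisms, the ECN thresholds are usually set below the point at which PFC would pause traffic, so that senders back off first and pause frames remain a last resort.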

Industry Echoes: The Demand for Scalable Solutions

Across the tech landscape, experts are sounding the alarm on the need for scalable networking to support AI’s rapid expansion. Studies indicate that data center traffic related to AI workloads has surged by over 60% in recent years, a trend that shows no signs of slowing. FS’s latest offering aligns perfectly with this reality, reflecting a deep understanding of the balance between cutting-edge performance and cost efficiency.

Feedback from industry insiders reinforces this perspective. A data center architect, speaking anonymously, noted that switches like the N8550-24CD8D are critical for managing the complexity of modern infrastructures without breaking budgets. This sentiment underscores a broader consensus: adaptability and scalability are no longer luxuries but necessities in the race to keep pace with AI advancements.

Real-World Impact: Transforming Data Centers with Practical Integration

For organizations eager to harness this technology, the path to integration is clear and actionable. Assessing current network capacity is the first step—determining whether splitting 200G ports into smaller configurations matches existing equipment can optimize compatibility. This approach ensures that the switch slots into diverse setups without requiring immediate, costly replacements.
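The capacity check described above can be sketched as a small planning helper. Given a count of existing devices by NIC speed, it estimates how many physical 200G ports are needed once breakouts are applied. The inventory figures are invented for the example, and the helper assumes one link per device and a single breakout mode per physical port.

```python
import math

PORT_SPEED_GBPS = 200    # per the article
TOTAL_200G_PORTS = 24    # per the article
BREAKOUT_SPEEDS = (200, 100, 50)  # native, 2 x 100G, 4 x 50G


def ports_needed(inventory: dict[int, int]) -> dict[int, int]:
    """Map each NIC speed to the number of physical 200G ports it consumes."""
    needed = {}
    for speed, devices in inventory.items():
        if speed not in BREAKOUT_SPEEDS:
            raise ValueError(f"{speed}G is not a listed breakout speed")
        links_per_port = PORT_SPEED_GBPS // speed
        needed[speed] = math.ceil(devices / links_per_port)
    return needed


def fits_on_one_switch(inventory: dict[int, int]) -> bool:
    """True if the whole inventory fits on the 24 downlink ports."""
    return sum(ports_needed(inventory).values()) <= TOTAL_200G_PORTS


if __name__ == "__main__":
    # Hypothetical inventory: NIC speed in Gbps -> number of devices.
    inventory = {200: 6, 100: 20, 50: 30}
    print(ports_needed(inventory))        # {200: 6, 100: 10, 50: 8}
    print(fits_on_one_switch(inventory))  # True
```

Rounding up per speed reflects that a port broken out for 50G cannot also serve a 100G device, so a partly used breakout still occupies a full physical port.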

Planning for long-term growth is equally vital. Utilizing the eight 400G uplinks to connect with spine layers facilitates a phased upgrade to full 400G infrastructure, reducing disruption. Moreover, configuring the switch to support low-latency AI workloads through protocols like RoCEv2 can significantly enhance server-storage efficiency, a critical factor for data-heavy operations.

Cost considerations also play a pivotal role. Compatibility with other FS products allows for incremental upgrades, spreading out capital expenditure over time. This strategic deployment not only maximizes the switch’s potential but also builds a resilient network foundation tailored to the unique demands of AI-driven data centers.

A Milestone Achieved: Reflecting on a Networking Leap

The unveiling of the N8550-24CD8D marks a notable step for AI data centers, pairing 200G access speeds with breakout flexibility and 400G uplinks. It addresses the pressure of growing data volumes with a design that balances new capability against practical migration. For enterprises running hybrid infrastructures, it offers a way to add capacity without replacing existing equipment all at once.

The journey does not end there, though. For organizations yet to make the move, the next steps are a thorough evaluation of network needs and a commitment to scalable upgrades. Working with solution providers like FS is one way to stay ahead of evolving demands. Ultimately, the launch is a reminder that investing in robust infrastructure is a necessity, not just an option, for operating in an AI-dominated landscape.