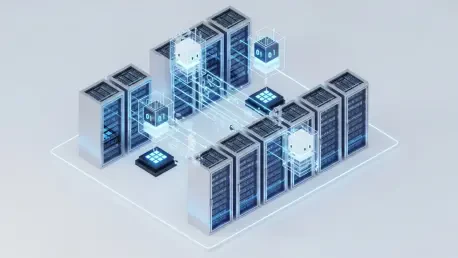

The rapid spread of autonomous artificial intelligence agents through corporate infrastructure has eroded the traditional boundaries of network security: these entities act with the agency of human employees while operating at machine speed. This shift forces a reconsideration of what it means to secure an enterprise. For decades, security professionals relied on deterministic models in which code behaved predictably, allowing them to build static rules and signature-based defenses. Modern AI, however, is probabilistic: its outputs are not always consistent even when given the same inputs. This unpredictability creates a significant blind spot for legacy systems designed to catch explicit deviations from a fixed baseline. As these digital coworkers gain deeper access to sensitive internal datasets to perform their tasks, they expand the attack surface well beyond what static, rule-based defenses were built to cover, which calls for more fluid, context-aware governance strategies.

The Duality of Artificial Intelligence Assets

Navigating the Shift From Deterministic to Probabilistic Risks

The core challenge facing modern cybersecurity teams lies in the dual nature of artificial intelligence, which functions simultaneously as a predictable machine and an erratic, human-like entity. In 2026, IT departments manage AI as a deterministic asset where compute and storage are concerned, yet the decision-making of large language models remains probabilistic. A server’s physical health can be monitored with traditional tools, but the “logic” of an AI agent may drift or produce unexpected results that bypass conventional security filters. When an AI tool is integrated into a workflow, it does not just execute a script; it interprets data and makes choices. This transition from rigid code to fluid reasoning requires security frameworks that can account for ambiguity. Organizations that fail to bridge this gap are vulnerable to prompt injection attacks and to data leakage, where the AI inadvertently shares confidential information because it misread the security context of a user request.
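
The contrast between deterministic and probabilistic behavior can be illustrated with a toy example. The sketch below is not how any particular language model is implemented; the action names, the scores, and the helper functions are all hypothetical, chosen only to show that sampling from a probability distribution can produce different results for the same input, while a rule that always picks the top score cannot.

```python
import math
import random


def softmax(scores, temperature=1.0):
    """Turn raw scores into a probability distribution."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def choose_action(scores, actions, rng, temperature=1.0):
    """Probabilistic choice: the same scores can yield different actions."""
    weights = softmax(scores, temperature)
    return rng.choices(actions, weights=weights, k=1)[0]


def choose_action_greedy(scores, actions):
    """Deterministic baseline: always take the highest-scoring action."""
    return actions[scores.index(max(scores))]


# Hypothetical actions and scores for one fixed user request (assumed values).
ACTIONS = ["refuse", "summarize", "share_file"]
SCORES = [2.0, 1.5, 0.5]

rng = random.Random(0)  # seeded so the run is repeatable
sampled = {choose_action(SCORES, ACTIONS, rng) for _ in range(1000)}
greedy = {choose_action_greedy(SCORES, ACTIONS) for _ in range(1000)}

print("sampled outcomes:", sorted(sampled))
print("greedy outcomes:", sorted(greedy))
```

Over many repetitions of the identical request, the sampled set contains several distinct actions, including the low-probability `share_file`, while the greedy set contains only `refuse`. A static rule written against the most likely behavior would never observe the rarer outcome, which is the blind spot described above.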

Acceleration of the Exploitation Lifecycle in the AI Era

Another critical factor in the evolving threat landscape is the speed at which malicious actors now identify and exploit vulnerabilities. In previous years, the window between the discovery of a software flaw and its active exploitation might have spanned weeks; AI can compress that window to minutes. Attackers use automated systems to scan for weaknesses and generate custom exploits at a scale that human defenders cannot match without intelligent automation of their own. The proliferation of AI agents with deep access to internal corporate data has also increased the number of potential entry points. These agents often operate with high-level permissions to fulfill their roles as digital coworkers, which makes them prime targets for lateral movement within a network. This acceleration demands that security teams move beyond detection alone and pre-emptively harden the AI environment, so that these powerful tools do not become the backdoors that compromise the entire organization.

Integrating Digital Coworkers Into Security Frameworks

Implementing Rigorous Identity Management for Autonomous Agents

As enterprises increasingly rely on AI agents to handle complex administrative and technical tasks, a growing consensus holds that these entities must be treated with the same security rigor as human employees. This means applying comprehensive identity and access management protocols to every digital coworker and limiting its permissions to the minimum required for its specific function. In the professional environment of 2026, simply granting an AI tool broad access to a cloud environment is considered a major security failure. Instead, organizations are beginning to implement non-human identity tracking that monitors the “behavior” of an agent just as they would monitor a human user. By assigning unique identifiers and rigorous authentication steps to AI processes, security teams can better track where data is flowing and who, or what, is accessing it. This level of granularity is essential for maintaining a zero-trust architecture in which every action, whether taken by a person or a machine, is verified and logged for potential review.
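
As a rough illustration of the ideas in this section, the following sketch gives each agent a unique identifier, an explicit allow-list of permissions, and an audit trail in which every request is recorded whether or not it is granted. It is a minimal sketch under stated assumptions, not a reference to any real identity platform: `AgentIdentity`, `AccessGateway`, the agent ID, the owner name, and the resource names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical non-human identity with a least-privilege allow-list."""
    agent_id: str        # unique identifier for this agent
    owner: str           # team accountable for the agent
    allowed: frozenset   # permitted (action, resource) pairs


@dataclass
class AccessGateway:
    """Checks every request against the allow-list and logs the decision."""
    audit_log: list = field(default_factory=list)

    def authorize(self, agent, action, resource):
        # Deny by default: only explicitly listed pairs are permitted.
        permitted = (action, resource) in agent.allowed
        # Record every attempt, granted or denied, for later review.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent.agent_id,
            "owner": agent.owner,
            "action": action,
            "resource": resource,
            "permitted": permitted,
        })
        return permitted


# Assumed example: an invoicing agent that may only read invoices.
invoice_bot = AgentIdentity(
    agent_id="agent-invoice-01",
    owner="finance-ops",
    allowed=frozenset({("read", "invoices")}),
)
gateway = AccessGateway()

ok = gateway.authorize(invoice_bot, "read", "invoices")
denied = gateway.authorize(invoice_bot, "read", "payroll")

print("read invoices:", ok)
print("read payroll:", denied)
print("logged events:", len(gateway.audit_log))
```

The design choice worth noting is that the gateway denies anything not explicitly listed and logs denied attempts alongside granted ones. A production system would also need real authentication of the agent before this check, which the sketch leaves out.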

Establishing Structural Accountability and Actionable Governance

The transition toward an AI-driven corporate ecosystem has reached a point where technical visibility alone is insufficient for maintaining safety. Security leaders recognize that the primary hurdle in modern defense is not merely a lack of tools but a lack of clear ownership and structural accountability for autonomous actions. From 2026 to 2028, the industry's focus shifts toward “actionability,” which requires understanding the organizational context of every AI tool in order to prioritize resources based on real-world risk. This evolution demands that companies define who is responsible when an autonomous agent makes a critical error or causes a security breach. Governing dynamic, intelligent entities requires a departure from managing static IT assets toward a more holistic model of digital governance. Organizations that successfully integrate these coworkers establish clear lines of authority, ensuring that every AI-driven decision remains within the bounds of corporate policy. This proactive stance allows businesses to harness the efficiency of automation while mitigating the inherent unpredictability of probabilistic systems.