The digital landscape is undergoing a seismic shift as the once-fabled titans of silicon and light recalibrate their ambitions across the European continent. This is more than a simple corporate pivot; it signals a fundamental change in how the industry approaches building artificial intelligence gigafactories. These high-density data centers have become the primary battleground for technological supremacy, providing the infrastructure required to host the next generation of large-scale models.

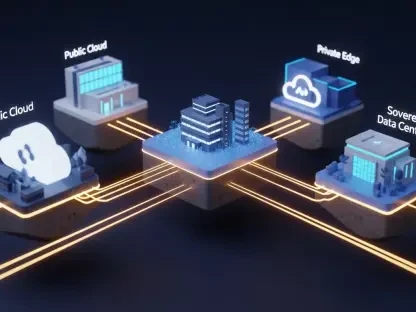

The current atmosphere is defined by a frantic drive toward sovereign compute power, where regional independence depends on the proximity of high-performance hardware. This shift has created a complex interplay among traditional hyperscalers, newer neocloud providers, and the AI developers who rely on them. As national governments recognize the economic weight of these facilities, the race to secure GPU-driven infrastructure has become a matter of digital survival rather than mere corporate expansion.

Shifting Paradigms in European Data Center Development

From Independent Gigafactories to Consolidated Hyperscaler Partnerships

The transition from the independent Stargate model to a more consolidated structure marks a strategic turning point for OpenAI. Rather than directly managing branded European facilities, the organization has opted for indirect involvement through its partnership with Microsoft. The change reflects the absorption of specialized infrastructure by firms such as Nscale and Aker, which have taken on the operational heavy lifting once envisioned as part of a direct joint venture.

Prioritizing this partnership over independent management lets OpenAI focus on software development while leaving complex facility logistics to established cloud operators. By stepping back from the front line of data center ownership, the organization avoids the administrative friction that comes with building and running European infrastructure. This move toward consolidation suggests that even the largest players treat the burden of independent facility management as secondary to securing raw compute access.

Quantifying the Hardware Shift and European Capacity Benchmarks

The planned deployment of Nvidia Rubin GPUs shows the sheer scale of the Narvik site, which currently stands at 230 MW of potential capacity. Although the facility was initially expected to be a flagship for independent AI operations, its tenancy has changed over a rollout timeline that extends to 2027. Microsoft is now poised to take a significant portion of this hardware, claiming more than 30,000 GPUs to support a wide range of regional AI workloads.

This hardware shift serves as a benchmark for how European capacity is being redistributed among legacy tech giants. As these corporations claim vacant space in high-density centers, the vision of independent sovereign compute platforms runs into a new reality. Consolidating these resources into existing cloud ecosystems means that while the hardware remains on European soil, control remains within established corporate frameworks.

Strategic Bottlenecks: Energy Costs and Resource Scarcity

Prohibitively high energy prices in the United Kingdom and across the continent have acted as a significant deterrent to massive infrastructure projects. Running a site that draws hundreds of megawatts is increasingly difficult in a market where utility costs fluctuate wildly. These financial pressures have forced a reassessment of whether large-scale independent projects are viable, pushing developers toward regions with more stable or subsidized energy prices.

Beyond the cost of power, the logistical complexity of procuring more than 100,000 advanced GPUs presents a formidable barrier to entry. Hardware availability remains tight, and the operational risk of managing such a vast fleet of equipment independently is often too great for a single organization to bear. Relying on established cloud ecosystems provides a buffer against these supply chain shocks and operational failures, making the hyperscaler model far more attractive.

Navigating the Complexities of European Digital Sovereignty and Regulation

Compliance with the evolving regulatory environment in the United Kingdom and the European Union has added another layer of difficulty to infrastructure planning. Data residency requirements and strict environmental standards call for a localized approach that is difficult to scale quickly. These hurdles, from local power grid integration to sustainability mandates, have slowed ambitious projects that were originally designed for rapid deployment.

Sovereign compute mandates are also shaping how American firms approach their expansion into the region. To meet the demands of local governments, companies must ensure that data remains within specific geographic boundaries while adhering to local carbon footprint goals. This regulatory landscape has made the path of least resistance one of partnership rather than direct competition with local infrastructure providers who already understand the nuances of the market.

The Future of AI Infrastructure: Consolidation vs. Direct Expansion

As Google and Microsoft move to fill the vacuum left by the original Stargate vision, the next phase of growth appears to be one of consolidation. The rise of the neocloud, led by specialized companies such as Nscale, indicates that a new middle tier of infrastructure is becoming the backbone of European AI. These firms provide the specialized cooling and power management that high-density chips require, acting as a bridge between chip makers and software developers.

Future market disruptors may find that high operational costs create a permanent barrier, leaving the market in the hands of a few dominant giants. This consolidation of power suggests that the era of independent gigafactories may be replaced by a more modular and collaborative approach to infrastructure. The shift implies that the physical location of the compute is less important than the efficiency of the partnership that delivers it.

Assessing the Long-Term Implications of OpenAI’s Strategic Pivot

The strategic retreat from direct European branding functions as a pragmatic move toward operational efficiency over ideological independence. Stakeholders and investors have observed that the complexities of high-cost energy markets demand a more flexible approach to infrastructure. The shift indicates that the initial vision of a monolithic, branded network was perhaps too rigid for the volatile economic landscape of the continent.

For investors following these developments, the lesson is that success in the European market requires deep integration with local partners rather than an isolated expansion strategy. The pivot toward hyperscaler reliance suggests that the future of large-scale AI capacity will be defined by shared resources and modular growth. These insights offer a roadmap for navigating the changing landscape of global compute power, where adaptability is becoming the most valuable asset for long-term sustainability.