Capital flooded into AI-ready clouds as enterprises rushed to modernize data, build generative interfaces, and wire up decision systems that move from batch analytics to real-time inference across apps, workflows, and edge endpoints, often without waiting on old procurement cycles or legacy constraints. That stampede redefined what “hyperscale” means: not just raw compute, but end-to-end stacks of chips, networks, storage, models, governance, and managed services delivered with enterprise-grade commitments on security, latency, and uptime. With buyers standardizing on multi-cloud architectures, the debate shifted from simple market share tallies to harder questions: who can translate surging AI interest into recurring revenue, who can fund the buildout without crushing margins, and who can price, package, and support AI in ways that survive procurement scrutiny after the proof-of-concept glow fades.

The Cloud AI Backdrop

Cloud infrastructure ended last year on a high note, reaching about $119 billion in Q4 and growing 30% year over year as AI workloads drove fresh capacity needs and new data pipelines. Early readings this year put AWS near 31% share, Azure at roughly 24%, and Google Cloud around 12%, with the trio controlling close to two-thirds of global spend. That concentration did not cap growth; it channeled it. Enterprises pushed sensitive data into governed lakes and warehouses, staged model training on specialized silicon, and spun up inference endpoints behind APIs. With generative AI expanding from copilots in productivity suites to vertical apps in healthcare, finance, and industrial IoT, double-digit growth forecasts looked less like optimism and more like a baseline tied to power, chips, and real estate.
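The share and growth figures above can be sanity-checked with a few lines of arithmetic. The sketch below uses only the numbers quoted in this section. The variable names are illustrative, and the prior-year figure is an implied value derived from the stated growth rate, not a reported one.

```python
# Figures quoted in the paragraph above; variable names are illustrative.
q4_market_usd_bn = 119.0   # Q4 cloud infrastructure spend, in $ billions
yoy_growth = 0.30          # 30% year-over-year growth
share_pct = {"AWS": 31, "Azure": 24, "Google Cloud": 12}  # approximate shares, in percent

# Combined share of the three largest providers.
combined_share_pct = sum(share_pct.values())

# Implied size of the same quarter one year earlier: current / (1 + growth).
prior_q4_usd_bn = q4_market_usd_bn / (1 + yoy_growth)

print(f"Combined share: {combined_share_pct}%")
print(f"Implied prior-year Q4: ${prior_q4_usd_bn:.1f}B")
```

A combined 67% matches the "close to two-thirds" description, and the implied prior-year quarter comes out near $91.5 billion.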

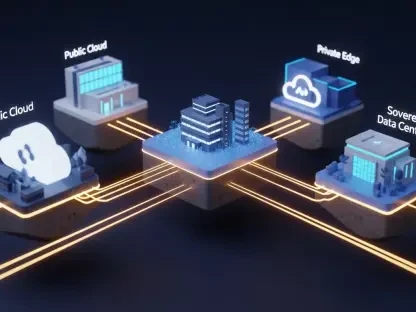

Building on this foundation, the AI wave favored providers that blurred lines between infrastructure and software. The winners bundled turnkey observability, vector databases, data cataloging, and security posture management alongside base compute, then layered managed services for retrieval-augmented generation and fine-tuning on top. Multi-cloud hardened as the default posture—roughly nine in ten large organizations avoided single-vendor bets—yet that did not equal commoditization. Differentiation showed up in the frictions customers did not feel: faster model deployment cycles, predictable egress for data-intensive analytics, and streamlined governance audits. In practice, that meant workloads often landed where data gravity, tool familiarity, and contractual commitments aligned, not where list prices were lowest.

Earnings Scorecard and Momentum

Execution through late April left a clear paper trail. Microsoft’s “Azure and other cloud services” advanced 39% year over year in the fiscal quarter ended December, folding into $51.5 billion in overall cloud revenue and supported by an estimated $625 billion in remaining performance obligations. The company pointed to strong AI consumption within Azure AI and early lift from Copilot seats across Microsoft 365 and developer tools. Amazon reported AWS growth reaccelerating to 24% in Q4, its best in over a dozen quarters, while asserting it added more absolute cloud revenue than rivals last year. Management spotlighted Bedrock adoption and traction for custom silicon—Trainium and Inferentia—where lower total cost of ownership nudged training and inference toward AWS.

Alphabet’s Google Cloud posted 48% growth in Q4 to $17.7 billion, its fastest clip in four years, and crucially turned an operating profit, easing skepticism about unit economics at scale. Backlog expanded, and commentary tied demand to BigQuery, Vertex AI, and TPU-based training for Gemini-class models and customer fine-tunes. Profitability mattered because it reframed Google Cloud from a growth project into a contributor with improving operating leverage. Across the board, backlog and committed spend became the tells investors watched most closely, since seat counts for copilots and AI platform usage can swing quarter to quarter. The early-year setup therefore hinged on whether guidance would show durable consumption, not just trial-fueled spikes, and whether margins could expand even as capital intensity rose.
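Growth rates and absolute dollars tell different stories, which is why Amazon's claim about absolute revenue added can coexist with Google Cloud's faster percentage growth. As a rough illustration, the sketch below backs out Google Cloud's implied prior-year quarter from the two figures quoted above. The helper name `prior_year_base` is hypothetical, and the results are derived estimates, not reported numbers.

```python
def prior_year_base(current: float, growth: float) -> float:
    """Back out the year-ago value implied by a current value and a YoY growth rate."""
    return current / (1 + growth)

# Figures quoted in the paragraph above.
google_cloud_q4_usd_bn = 17.7   # Q4 revenue, in $ billions
google_cloud_growth = 0.48      # 48% year-over-year growth

base = prior_year_base(google_cloud_q4_usd_bn, google_cloud_growth)
added = google_cloud_q4_usd_bn - base   # absolute revenue added versus a year ago

print(f"Implied year-ago quarter: ${base:.2f}B")
print(f"Implied revenue added:    ${added:.2f}B")
```

On these inputs the year-ago quarter is roughly $12.0 billion and the increment roughly $5.7 billion. A provider growing more slowly from a larger base can still add more dollars than that.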

Capex, Power, and Strategic Moats

The capex supercycle defined the competitive frontier. Amazon earmarked roughly $200 billion this year for AI infrastructure, Alphabet targeted about $175 billion, and Microsoft accelerated spend tied to OpenAI integration and Azure AI capacity. That money did not go solely to GPUs and TPUs; it chased power procurement, substation partnerships, immersion cooling pilots, and greenfield campuses near fiber backbones. Power constraints emerged as a gating factor shaping where the next training clusters would live and how quickly inference could scale at the edge. Regulatory scrutiny and community pushback around water use, grid strain, and land availability added friction. The strategic question was not whether to spend, but how fast returns on that spend could show up in gross margin and free cash flow.

Strategy amplified those investments. AWS leaned on a vast catalog of 200-plus services, along with Bedrock’s managed orchestration and its homegrown chips, to drive efficiency at scale. Its startup loyalty and enterprise certifications across regulated industries gave it a wide funnel for AI pilots that graduate into production. Microsoft parlayed deep enterprise contracts, security stack integration, and hybrid cloud via Azure Arc to meet customers where data and compliance live, while embedding generative AI into everyday software through Copilot. Google Cloud differentiated with data-centric tooling: BigQuery for analytics gravity, Kubernetes leadership for portability, and TPUs for competitive training economics, all wrapped by Vertex AI and Gemini. Each moat suggested a path to monetization, but also a constraint: Amazon’s conglomerate mix can mute consolidated margins, Microsoft faces near-term cost curves, and Alphabet still rides an ad-heavy base.

Valuation, Portfolio Fit, and What to Watch

Valuation set the investor calculus. Microsoft near $424 traded around 31x forward earnings, a premium driven by software-led durability, entrenched enterprise ties, and a modest dividend near 0.8%. Amazon around $264 sat closer to 28x, reflecting the blended profile of retail, ads, and cloud, yet offering leverage if AWS reacceleration endured. Alphabet near $344 had rallied on improving cloud profitability, but consensus upside trailed Microsoft’s given advertising’s outsized weight. Portfolio fit followed naturally: growth-tilted investors gravitated to Alphabet’s steeper cloud curve and AI-first posture; value-leaning buyers saw Amazon as scale-plus-discount with AI catalysts; quality seekers favored Microsoft’s visibility, hybrid reach, and cross-stack AI embedding. The common thread was discipline—capex efficiency, backlog quality, and margin trajectory.
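The multiples above imply a forward earnings figure for each stock, since a forward P/E is simply price divided by expected earnings per share. The sketch below works that out for the two names where both a price and a multiple are quoted. The function name `implied_forward_eps` is hypothetical, and the outputs are back-of-envelope values implied by the rounded figures in the text, not consensus estimates.

```python
def implied_forward_eps(price: float, forward_pe: float) -> float:
    """Forward EPS implied by a share price and a forward P/E multiple."""
    return price / forward_pe

# (share price in $, forward P/E) as quoted in the paragraph above.
quotes = {
    "Microsoft": (424.0, 31.0),
    "Amazon": (264.0, 28.0),
}

implied = {name: implied_forward_eps(price, pe) for name, (price, pe) in quotes.items()}

# Microsoft's annual dividend per share implied by a yield near 0.8%.
msft_dividend_per_share = 424.0 * 0.008

for name, eps in implied.items():
    print(f"{name}: implied forward EPS of about ${eps:.2f}")
print(f"Microsoft: implied annual dividend of about ${msft_dividend_per_share:.2f} per share")
```

On these inputs, Microsoft's implied forward EPS is about $13.68 and Amazon's about $9.43. The three-turn gap in multiples is the premium the text attributes to Microsoft's software-led durability.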

The near-term checklist was straightforward and practical. Investors rotated playbooks to favor names disclosing clearer AI revenue, detailing custom silicon roadmaps, and quantifying power procurement and data center ramp cadence. Hedging against capex surprises and power delays was sensible, as was modeling multi-cloud persistence rather than lock-in. For selection, the balanced single-stock choice remained Microsoft given RPO strength and embedded AI monetization, while Amazon fit those prioritizing scale at a lower multiple and Alphabet suited those targeting higher-growth AI exposure from a smaller base. Across the trio, durable outperformance hinged on turning AI interest into contracted, usage-based revenue while bending cost curves on chips, power, and operations; investors who acted on those signals positioned portfolios for the next leg of compound gains.