As a veteran authority in cloud technology and enterprise tech stacks, Maryanne Baines has spent years navigating the complex intersection of infrastructure and industry-specific software applications. Her deep experience evaluating major cloud providers positions her perfectly to analyze the shift toward more restrictive data access policies in the enterprise world. Today, she shares her insights on how these policy changes influence the delicate balance between system security, developer freedom, and the competitive landscape of third-party AI tools.

This conversation explores the technical hurdles created by endorsed architectures, the operational risks of relying on undocumented interfaces, and the strategic trade-offs of modern API governance.

Restrictions have been introduced regarding APIs interacting with autonomous AI systems that plan or execute call sequences outside specific endorsed architectures. How does this policy impact the integration of third-party generative AI tools, and what technical hurdles do developers face when trying to operate within these predefined pathways?

This policy creates a significant barrier for organizations looking to leverage best-of-breed generative AI tools that sit outside a vendor’s “endorsed” ecosystem. By prohibiting autonomous and semi-autonomous systems from planning or executing sequences of API calls unless they follow specific pathways, the vendor effectively dictates the architectural logic of the customer’s entire AI strategy. Developers are now hitting a wall where they can’t simply point an agentic AI at an API and let it perform complex tasks like multi-step data orchestration or automated reporting. Instead, they must navigate a maze of “service-specific pathways,” which often feel like a digital straitjacket that limits the creativity and efficiency of modern AI agents.

Given the delays in publishing updated API documentation and templates, how can organizations maintain custom applications that currently rely on undocumented interfaces? What are the long-term risks regarding vendor control and the potential throttling of data access if developers are forced to use only “official” lists?

The reality on the ground is that many developers have historically relied on undocumented APIs because the official documentation and templates are often published too slowly to keep up with business needs. If organizations are suddenly forced to abandon these interfaces, they face a “development freeze” where existing custom applications could break or lose functionality overnight. The long-term risk here is absolute vendor lock-in, where the provider can monitor, govern, and throttle any development that doesn’t fit their commercial roadmap. When a vendor controls the “official” list of access points, they essentially own the faucet, deciding exactly how much data flows to your custom-built innovations and at what speed.

Enterprise systems now face constant automated probing from AI-driven agents, which makes tighter security a necessity. How do you balance the need for robust protection against unauthorized data extraction with the requirement for open integration, and what are the operational trade-offs of treating loose integration patterns as vulnerabilities?

Finding a balance is incredibly difficult because enterprise systems are no longer tested occasionally; they are being probed by AI-driven agents continuously. We have to recognize that loose integration patterns, while flexible, are legitimate vulnerabilities that can be exploited for large-scale data harvesting far faster than any human operator could manage. However, the trade-off is that by tightening these screws, you often choke off the very interoperability that makes a cloud platform valuable. Treating every non-standard integration as a security threat might protect the perimeter, but it can also turn an agile enterprise environment into a siloed fortress where data becomes trapped and stagnant.

While data ownership remains with the customer, high-volume API requests can lead to system performance issues and mandatory throttling. How should firms design their data extraction strategies to avoid these bottlenecks, and what does this mean for the actual cost of scaling AI agents on enterprise platforms?

To avoid being hit by mandatory throttling, firms need to move away from “brute force” data extraction, where every run re-reads everything, and toward more intelligent, incremental synchronization patterns that pull only what has changed since the last run. When you have millions of calls hitting an API, application-side performance degrades rapidly, forcing the vendor to step in and limit access to protect stability. This adds a hidden layer of cost to scaling AI agents because you can no longer assume “free” access to your own data in a high-velocity environment. Companies must now invest more in middleware and data orchestration layers to cache and manage requests, effectively paying a “complexity tax” just to keep their AI systems fed and functional.
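To make the incremental pattern concrete, here is a minimal Python sketch of a middleware layer that keeps a local cache and asks the source only for records changed since its last sync. Everything named here is an assumption for illustration: `FakeSource` stands in for a vendor API, and the `changes_since` call and the per-record `version` counter are hypothetical, not any real provider’s interface.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass(frozen=True)
class Record:
    id: str
    payload: str
    version: int  # assumed: a monotonically increasing change counter


class FakeSource:
    """In-memory stand-in for a vendor API (hypothetical, illustration only)."""

    def __init__(self) -> None:
        self._rows: Dict[str, Record] = {}
        self._version = 0
        self.calls = 0  # counts how many requests the "API" received

    def upsert(self, record_id: str, payload: str) -> None:
        self._version += 1
        self._rows[record_id] = Record(record_id, payload, self._version)

    def changes_since(self, cursor: int) -> List[Record]:
        # Returns only records modified after the caller's cursor.
        self.calls += 1
        return [r for r in self._rows.values() if r.version > cursor]


@dataclass
class IncrementalSync:
    """Caches records locally and fetches only what changed since the last sync."""

    fetch_changes: Callable[[int], List[Record]]
    cursor: int = 0
    cache: Dict[str, Record] = field(default_factory=dict)

    def sync(self) -> int:
        changed = self.fetch_changes(self.cursor)
        for rec in changed:
            self.cache[rec.id] = rec
            self.cursor = max(self.cursor, rec.version)
        return len(changed)

    def get(self, record_id: str) -> Optional[Record]:
        # Reads are served from the cache and never touch the source.
        return self.cache.get(record_id)


if __name__ == "__main__":
    source = FakeSource()
    for key, value in [("a", "1"), ("b", "2"), ("c", "3")]:
        source.upsert(key, value)

    middleware = IncrementalSync(source.changes_since)
    print(middleware.sync())   # first sync pulls all three records
    source.upsert("b", "2b")
    print(middleware.sync())   # second sync pulls only the changed record
```

In this sketch, AI agents would read through `get`, so the number of requests reaching the source depends on how often `sync` runs rather than on how many agent reads occur. The cost is the extra layer itself, which is the “complexity tax” described above.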

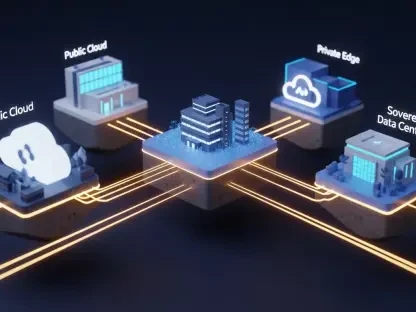

As major enterprise software providers shift their data access policies, how does this affect the competitive landscape for third-party partners? In what ways could restrictive policies drive customers toward alternative platforms that offer more flexible integration for their proprietary data and autonomous workflows?

Restrictive policies create an uneven playing field where the vendor’s own AI tools have “fast-pass” access while third-party partners are stuck in the slow lane or blocked entirely. This perceived “lock-out” is causing a lot of friction in the partner community, as it limits their ability to offer competitive, innovative solutions to shared customers. If these barriers become too high, we will likely see a migration toward alternative, more “open” platforms that treat data liquidity as a core feature rather than a liability. Customers are increasingly wary of being “locked in,” and they will vote with their budgets by choosing ecosystems that allow them to use their proprietary data wherever and however they see fit.

What is your forecast for the future of enterprise API ecosystems and AI integration?

My forecast is that we are entering an era of “Gatekeeper Governance,” where the tension between open platforms and proprietary protection will reach a breaking point. Over the next few years, I expect to see a surge in the adoption of standardized “Data Contracts” that attempt to bridge the gap between vendor security needs and customer autonomy. However, the providers who refuse to publish documentation faster and who use throttling as a competitive weapon will likely lose market share to more flexible, cloud-native challengers. Ultimately, the winners in the enterprise space will be those who figure out how to secure the platform without stifling the very AI-driven innovation their customers are desperate to implement.