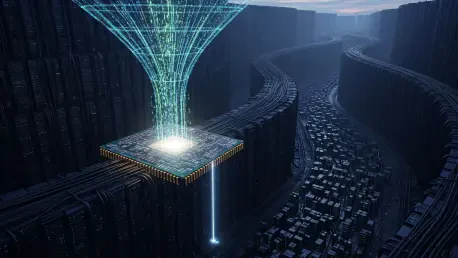

The breakneck speed of silicon innovation has finally outpaced the physical wires connecting the world, creating a paradox where the most powerful chips ever designed sit idle while waiting for data to arrive. As the global race for artificial intelligence supremacy intensifies, the conversation has largely centered on the scarcity of high-end GPUs and the staggering power requirements of massive data centers. However, a silent crisis is emerging beneath the surface: the inadequacy of the networking infrastructure designed to connect these components. While organizations are pouring billions into raw processing power, the “pipes” intended to transport the vast quantities of data required for training and inference are beginning to burst. This analysis explores why the network has transitioned from a background utility to the primary bottleneck for AI growth and why failing to address this gap could render the most sophisticated investments obsolete.

The Evolution: From Crypto Mining to AI Infrastructure

The current infrastructure landscape is shaped by a rapid and somewhat chaotic transition that has redefined the utility of the modern data center. Following the decline of the cryptocurrency boom, many specialized providers—now referred to as “neoclouds”—pivoted their massive GPU farms toward AI workloads. These companies originally built their foundations on architectures suited for crypto mining or content delivery, where the demands on the network backbone were relatively linear and predictable. In those environments, a simple connection was often sufficient to maintain operations.

However, the requirements for large language models and generative AI are fundamentally different from any previous digital workload. Historically, networking was treated as “plumbing”, a commodity that functioned reliably in the background without much strategic oversight. That focus on simple connectivity has left a legacy of rigid, low-resilience backbones that struggle to support the high-concurrency, low-latency demands of distributed AI clusters. This architectural mismatch is becoming a visible friction point for scaling intelligence.

The Neocloud Infrastructure Gap: Reaching the Performance Ceiling

The Disparity: Compute Capacity versus Connectivity

One of the most pressing issues in the current market is the significant gap between raw GPU availability and actual networking throughput. Many neocloud providers have successfully secured the latest hardware from manufacturers, yet their internal networking capabilities remain rudimentary. Research suggests that while these providers can offer immense compute power, their backbones often lack the sophisticated fabric required for multi-node synchronization. Without high-speed, low-latency interconnects, GPUs spend a disproportionate amount of time waiting for data to arrive, leading to “compute stalls” that drain efficiency.
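To make the cost of a “compute stall” concrete, the following is a rough back-of-the-envelope sketch rather than a measurement of any real provider. It assumes gradients are synchronized with a ring all-reduce, whose bandwidth term is the standard 2(N-1)/N times the payload size, and it ignores per-message latency and any overlap of communication with computation. The function names, the 10 GB payload, the 8-node cluster, and the link speeds are all hypothetical values chosen for illustration.

```python
def allreduce_seconds(payload_bytes: float, n_nodes: int, link_gbps: float) -> float:
    """Bandwidth-only estimate of one ring all-reduce.

    Each node sends (and receives) 2 * (n - 1) / n times the payload.
    Per-hop latency is ignored, so this is an optimistic lower bound.
    """
    if n_nodes < 2:
        return 0.0  # a single node has nothing to synchronize
    bytes_on_wire = 2 * (n_nodes - 1) / n_nodes * payload_bytes
    return bytes_on_wire * 8 / (link_gbps * 1e9)


def gpu_utilization(compute_s: float, comm_s: float) -> float:
    """Fraction of a training step spent computing, assuming no overlap
    between computation and communication (a pessimistic simplification)."""
    return compute_s / (compute_s + comm_s)


if __name__ == "__main__":
    # Hypothetical workload: 10 GB of gradients, 8 nodes, 1 s of compute per step.
    payload, nodes, compute = 10e9, 8, 1.0
    for gbps in (100, 400):
        comm = allreduce_seconds(payload, nodes, gbps)
        util = gpu_utilization(compute, comm)
        print(f"{gbps} Gbps link: {comm:.2f} s syncing, {util:.0%} GPU utilization")
```

Under these assumptions the same GPUs do noticeably less useful work per step on the slower link, which is the gap between nominal compute capacity and delivered throughput described above.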

The Shift: From Static Plumbing to an Intelligent Nervous System

To survive the AI era, industry leaders argue that the network must undergo a fundamental transformation, moving away from being “background plumbing” and toward becoming a “nervous system.” Unlike traditional web traffic, which is bursty and human-driven, AI traffic is increasingly dominated by autonomous agents and bots. In fact, reports indicate that automated traffic now accounts for over half of all internet transmissions. These AI agents operate continuously, requiring a network that can dynamically coordinate data movement between diverse clouds and edge locations.

Regional Sovereignty: The Complexity of Global Data Movement

Beyond technical throughput, the bottleneck for AI growth is further complicated by geopolitical and regulatory pressures. As AI workloads become more distributed, the network must account for data sovereignty laws that vary by region. Moving petabytes of training data across borders introduces significant legal risks and security vulnerabilities. Many existing network infrastructures were not built with the end-to-end encryption or the granular routing controls necessary to satisfy modern compliance standards. This complexity often forces organizations to keep data siloed, which limits the scale of their models.

Future Trends: Adaptive and Consumption-Based Networking

Looking ahead, the networking industry is poised for a radical transformation characterized by dynamic scalability and software-defined architectures. The next generation of networking will likely mirror the cloud’s consumption-based model, allowing companies to scale bandwidth up or down in real-time based on the specific needs of an AI training run. We can expect to see a rise in “programmable fabrics” that allow AI architects to define network paths via code, optimizing for latency or cost on the fly without manual hardware intervention.
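As a minimal sketch of what defining network paths via code could look like, the example below picks a route between two sites by minimizing either latency or cost, using a plain shortest-path search. It is not the API of any real fabric controller: the site names, the link attributes (`latency_ms`, `cost`), and the `best_path` function are hypothetical and exist only to illustrate the idea.

```python
import heapq

# Hypothetical links: (site_a, site_b, attributes). Values are made up.
LINKS = [
    ("dc-east", "dc-west", {"latency_ms": 60, "cost": 1.0}),
    ("dc-east", "edge-hub", {"latency_ms": 20, "cost": 4.0}),
    ("edge-hub", "dc-west", {"latency_ms": 25, "cost": 4.0}),
]


def best_path(links, src, dst, metric):
    """Dijkstra's shortest path over bidirectional links, weighted by `metric`.

    Returns (total_weight, [sites...]) or None if dst is unreachable.
    """
    graph = {}
    for a, b, attrs in links:
        graph.setdefault(a, []).append((b, attrs[metric]))
        graph.setdefault(b, []).append((a, attrs[metric]))

    queue = [(0.0, src, [src])]  # (weight so far, current site, path taken)
    settled = set()
    while queue:
        total, node, path = heapq.heappop(queue)
        if node == dst:
            return total, path
        if node in settled:
            continue
        settled.add(node)
        for nxt, weight in graph.get(node, []):
            if nxt not in settled:
                heapq.heappush(queue, (total + weight, nxt, path + [nxt]))
    return None


if __name__ == "__main__":
    # The same topology yields different routes depending on the objective.
    print(best_path(LINKS, "dc-east", "dc-west", "latency_ms"))
    print(best_path(LINKS, "dc-east", "dc-west", "cost"))
```

In this toy topology the latency objective routes through the intermediate hub while the cost objective takes the direct link, which is the kind of trade-off a programmable fabric would let an architect express per workload.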

Furthermore, as AI at the edge becomes more prevalent, the integration of high-speed wireless and satellite connectivity into a unified AI network will become a standard requirement. Experts predict that the providers who succeed will be those who treat connectivity not as a static asset, but as a flexible, high-performance service. This shift toward “as-a-service” networking will allow smaller firms to compete with tech giants by providing them with the same level of infrastructure agility previously reserved for the world’s largest data centers.

Strategic Recommendations: Building an AI-Ready Infrastructure

For businesses and IT leaders, the primary takeaway is that a GPU-first strategy is no longer sufficient to guarantee a return on investment. To avoid the networking bottleneck, organizations need to take a more holistic approach to their infrastructure investments.

- Audit Provider Backbones: Selecting a cloud or GPU-as-a-service provider requires looking beyond the hardware specs. Investigating the network architecture for evidence of high-speed interconnects and low-latency resilience should be a mandatory step.

- Prioritize Scalability: Investing in network solutions that offer API-driven control and consumption-based pricing helps the infrastructure grow alongside the project.

- Integrate Security Early: Treating network security as an integral component rather than an afterthought allows for better protection of intellectual property and simplifies compliance with global regulations.

- Evaluate Total Cost of Ownership: Accounting for the hidden costs of network latency shows how slow data movement increases the time and money required to train models.

Bridging the Gap: Long-Term Success in a Connected Era

The artificial intelligence revolution has reached a crossroads where the brilliance of software is hindered by the limitations of hardware connectivity. As the market shifts described above show, the “weak network” has become the critical constraint that threatens to stall innovation and diminish the return on massive AI investments. By reimagining the network as a dynamic nervous system rather than a static utility, and by prioritizing infrastructure resilience, organizations can unlock the full potential of their compute power. Ultimately, the leaders of the AI era will be not just those with the fastest processors, but those who recognize that a brain is only as effective as the nerves that carry its signals. Moving forward, the focus is shifting toward building interconnected ecosystems where data moves as fast as the thoughts it generates.