Maryanne Baines is a distinguished authority in cloud technology and cybersecurity infrastructure, renowned for her deep-dive evaluations of enterprise tech stacks and their real-world applications. With a career built on bridging the gap between complex software vulnerabilities and scalable business solutions, she has spent years helping organizations navigate the shifting sands of digital defense. Today, she shares her insights on the transformative potential of specialized AI security tools, focusing on the recent evolution of automated vulnerability management and the delicate balance between autonomous patching and human oversight.

The following discussion explores the integration of AI into the DevSecOps lifecycle, examining how tools can now move from mere detection to active remediation within a single session. We delve into the mechanics of confidence-based prioritization to combat alert fatigue, the logistical hurdles of embedding security data into platforms like Jira and Slack, and the ethical considerations surrounding high-capability models that can simulate multi-step network attacks.

Moving from vulnerability detection to automated patching often creates friction in enterprise environments. How does a single-session workflow from repository scanning to code repair change the daily routine for security engineers, and what specific validation steps are necessary before deploying these AI-generated fixes?

The shift to a single-session workflow fundamentally alters the rhythm of a security engineer’s day by collapsing the time-consuming bridge between identifying a flaw and drafting a solution. Historically, an engineer might spend hours jumping between a scanner and their IDE, but with a single-click scan that analyzes component relationships and data usage, that friction begins to evaporate. The process starts with a full repository scan to flag potential flaws, followed immediately by the tool opening a code environment to suggest a specific patch. For validation, engineers must first review the reproduction steps provided by the AI to ensure the vulnerability is not a ghost in the machine. Then, they need to scrutinize the AI’s justification for the severity level and its confidence score before running the generated fix through a standard CI/CD staging environment to ensure no regressions occur.

Security tools often struggle with “alert fatigue” caused by false positives. How does attaching a specific confidence percentage to a vulnerability report impact the prioritization of remediation tasks, and what metrics should teams track to verify the accuracy of these AI-driven justifications?

Attaching a confidence percentage transforms a chaotic list of alerts into a disciplined, risk-based roadmap that respects a developer’s limited time. When a tool flags a vulnerability with a high confidence rating, the team can breathe a sigh of relief because they are far less likely to be chasing a false-positive phantom. To verify this accuracy, teams should track the “conversion rate” of AI-flagged vulnerabilities into accepted patches, as well as the frequency of human overrides on AI-assigned severity levels. We saw feedback from hundreds of firms indicating that this nuanced approach, which pairs each finding with a probability of legitimacy, allows senior executives to focus on high-quality, novel findings rather than getting bogged down in noise. It’s about moving away from the “crying wolf” syndrome that has plagued traditional security scanners for decades.
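As a rough illustration of the prioritization and the two metrics described above, here is a minimal Python sketch. The `Finding` fields, the severity weights, the `min_confidence` cutoff, and the scoring rule (severity weight multiplied by confidence) are all assumptions made for the example, not the behavior of any particular tool.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical severity weights; a real team would tune these.
SEVERITY_WEIGHT = {"low": 1, "medium": 2, "high": 3, "critical": 4}


@dataclass
class Finding:
    id: str
    severity: str                         # severity assigned by the tool
    confidence: float                     # tool-reported confidence, 0.0 to 1.0
    patch_accepted: bool = False          # True once a fix for it is merged
    human_severity: Optional[str] = None  # set only if a reviewer re-rates it


def priority(finding: Finding) -> float:
    """Assumed scoring rule: severity weight scaled by confidence."""
    return SEVERITY_WEIGHT[finding.severity] * finding.confidence


def triage(findings: List[Finding], min_confidence: float = 0.5) -> List[Finding]:
    """Drop low-confidence findings, then sort the rest by priority."""
    kept = [f for f in findings if f.confidence >= min_confidence]
    return sorted(kept, key=priority, reverse=True)


def conversion_rate(findings: List[Finding]) -> float:
    """Share of flagged findings that ended in an accepted patch."""
    if not findings:
        return 0.0
    return sum(f.patch_accepted for f in findings) / len(findings)


def override_rate(findings: List[Finding]) -> float:
    """Share of findings whose severity a human changed."""
    if not findings:
        return 0.0
    changed = sum(
        1 for f in findings
        if f.human_severity is not None and f.human_severity != f.severity
    )
    return changed / len(findings)
```

A falling conversion rate or a rising override rate over time would suggest that the reported confidence and severity values deserve less trust than the team is giving them.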

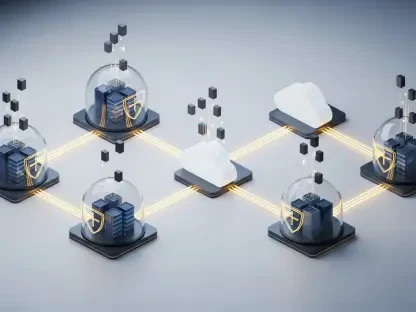

Scaling security across large organizations requires seamless data movement into existing project management tools. When exporting vulnerability data to platforms like Jira or Slack, how should teams structure their response workflows, and what are the best practices for managing scheduled scans without disrupting development cycles?

To scale effectively, the workflow must be invisible until it is actionable, ensuring that security data flows into Jira or Slack as structured, prioritized tasks rather than just more unread notifications. Best practices involve setting up targeted scans that focus on specific, high-risk components during peak development hours, while reserving full repository scheduled scans for off-peak times to minimize performance hits. When a vulnerability is exported, it should arrive with Markdown or CSV attachments that include the AI’s reproduction steps, allowing a developer to see the “why” and “how” without leaving their primary workspace. This integration ensures that security becomes a background process that surfaces only when a human decision is required, which is a significant leap forward from the days of manual reporting. By providing clear justifications and severity metrics, the AI helps bridge the gap between the security department and the development team.
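To make the export step concrete, the sketch below renders a finding as a Markdown attachment and a batch of findings as CSV, using only the Python standard library. The dictionary keys (`title`, `severity`, `confidence`, `justification`, `repro_steps`) are a hypothetical schema chosen for the example, and actually attaching the output to a Jira issue or Slack message is deliberately left out.

```python
import csv
import io
from typing import Dict, List

CSV_FIELDS = ["title", "severity", "confidence"]


def to_markdown(finding: Dict) -> str:
    """Render one finding as a Markdown document for a ticket attachment."""
    steps = "\n".join(
        f"{i}. {step}" for i, step in enumerate(finding["repro_steps"], start=1)
    )
    return (
        f"## {finding['title']}\n\n"
        f"- Severity: {finding['severity']}\n"
        f"- Confidence: {finding['confidence']:.0%}\n\n"
        f"### Justification\n\n{finding['justification']}\n\n"
        f"### Reproduction steps\n\n{steps}\n"
    )


def to_csv(findings: List[Dict]) -> str:
    """Render a summary table of findings as CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=CSV_FIELDS, lineterminator="\n")
    writer.writeheader()
    for finding in findings:
        # Keep only the summary columns; details live in the Markdown file.
        writer.writerow({key: finding[key] for key in CSV_FIELDS})
    return buffer.getvalue()
```

Keeping the reproduction steps in the Markdown body and only summary columns in the CSV is one possible split: the CSV stays sortable in a spreadsheet, while the Markdown carries the "why" and "how" a developer needs inside the ticket.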

Advanced AI models are now capable of executing complex, multi-step network attack simulations. Given the risk of dual-use capabilities, how do embedded guardrails function to prevent malicious exploitation while still allowing legitimate security teams to identify novel flaws, and where should the line be drawn for public access?

The tension between defensive utility and offensive risk is palpable, especially when a model can autonomously execute a 32-step enterprise network attack simulation. Embedded cyber guardrails function as a set of digital constraints that prevent the model from engaging in high-risk tasks that lean toward exploitation rather than remediation. We have seen a commitment to “gating” these capabilities, where the most powerful models are reserved for select partners under research previews rather than being released to the general public. This cautious approach is necessary because, while a model might breach a weakly defended system in a simulation, those environments lack proactive human defenders, so the results leave the real-world risk uncertain and the capability remains a “dual-use” wild card. The line for public access must be drawn at the point where the AI moves from identifying a flaw to providing a weaponized, end-to-end exploit script that requires no human intervention.

Beyond simple pattern matching, modern analysis involves examining the intricate relationships between various code components and data usage. Can you walk through the process of how an AI interprets the viability of source code during a scan and what specific indicators suggest a vulnerability is truly exploitable?

Modern AI interpretation goes far beyond looking for a “bad” string of text; it maps the flow of data across the entire architecture to see if a vulnerability is actually reachable. The process begins by tracing the relationships between components to determine if an untrusted input can reach a sensitive sink, such as a database query or a system command. An AI looks for specific indicators, like a lack of input validation or improper state management, that suggest a flaw isn’t just a theoretical error but a “viable” exploit path. It assesses the context, looking at how data is used in practice, to decide if a potential bug is truly a high-quality finding that could affect the customer environment. This contextual understanding of code logic allows the tool to provide reproductions that feel less like a checklist and more like a detailed forensic report.
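The source-to-sink tracing described above can be illustrated with a small reachability check over a data-flow graph. This is a simplified sketch of classic taint analysis, not a description of how any specific AI scanner works internally; the graph format, the node names, and the idea of marking certain nodes as sanitizers are assumptions for the example.

```python
from collections import deque
from typing import Dict, FrozenSet, Iterable, List, Set


def find_exploit_paths(
    flows: Dict[str, List[str]],
    sources: Iterable[str],
    sinks: Set[str],
    sanitizers: FrozenSet[str] = frozenset(),
) -> List[List[str]]:
    """Breadth-first search from each untrusted source to sensitive sinks.

    `flows` maps a node to the nodes its data flows into. Paths that pass
    through a sanitizer node are treated as safe and are not followed.
    """
    paths = []
    for source in sources:
        queue = deque([[source]])
        seen = {source}
        while queue:
            path = queue.popleft()
            node = path[-1]
            if node in sinks:
                paths.append(path)  # untrusted data reaches a sink
                continue
            for nxt in flows.get(node, ()):
                if nxt in seen or nxt in sanitizers:
                    continue
                seen.add(nxt)
                queue.append(path + [nxt])
    return paths


# Hypothetical data-flow graph for a small web handler.
flows = {
    "http_param:q": ["build_query", "escape_html"],
    "build_query": ["db.execute"],
    "escape_html": ["render_template"],
}
paths = find_exploit_paths(
    flows,
    sources=["http_param:q"],
    sinks={"db.execute", "render_template"},
    sanitizers=frozenset({"escape_html"}),
)
print(paths)
```

In this toy graph the query parameter reaches `db.execute` without validation, so that path is reported, while the route through `escape_html` is not. A real analysis would also have to judge whether a sanitizer is actually adequate for the sink it protects.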

What is your forecast for AI-driven vulnerability management?

I expect to see a total convergence of the “detect” and “fix” phases, where the role of the security engineer shifts from a manual investigator to a high-level orchestrator of autonomous systems. Within the next few years, the standard for enterprise security will be models that not only identify novel flaws but also proactively defend against 32-step attack chains in real time, working alongside human defenders to neutralize threats before they can be felt. We are moving toward a future where 700+ senior executives will no longer fear the volume of threats, but will instead trust in the high-confidence, automated remediation cycles that keep their environments resilient and their customers safe.